Adrian Crenshaw, a well-known InfoSec expert and author of Irongeek.com, provides a comprehensive overview of known darknets at AIDE Conference.

Hello everyone! My name is Adrian Crenshaw, and my presentation today is Darknets: An Overview of Attack Strategies. First of all, a little bit about me, if anybody hasn’t been at my presentations before. I’ll make this brief. I run Irongeek.com. I have an interest in InfoSec education. I don’t know everything – I’m just a geek with time on my hands; it’s possible that I get a few things wrong – if so, let me know, I’d be interested in knowing the technical details of what exactly is going on. And I am an (ir)regular on the ISDPodcast, usually every Thursday. I’m also a researcher for Tenacity Institute.

A little background: first of all, what technologies is this talk going to be about? There are pretty much a million definitions of darknets, but my particular one is, essentially, “anonymizing networks” – generally speaking, the use of encryption and proxies, or systems of several nodes that you cascade through, to hide who is actually who and who is communicating with whom on a network (see right-hand image). Darknets are sometimes also referred to as Cipherspaces; I kind of like this term better because sometimes people use the term “darknet” when they mean only friend-to-friend networks, whereas I mean darknet in the broader sense: Tor in general, I2P in general, and so forth. I’ll be using the terms “Tor” and “I2P” a lot here – those are the two darknets I’m going to be talking the most about; they’ve got major deployment, so these are the ones that I’ll use whenever I use an example. Those seem to be the biggest contenders in this particular space. When I say “contenders” I don’t necessarily imply competition; they both have slightly different focuses.

A few notes. A lot of this stuff gets subtle, a lot of the attacks get subtle, and getting it to actually function might be a crapshoot. Terms vary from researcher to researcher, so you go out there and read terms in academia, and you’re like: “Ok, what do you mean by this?” I’ve come across the terms “sybil” and “sockpuppet”. I used to be more familiar with the term “sockpuppet” – until I started looking into research and academia, I never heard the term “sybil”.

Many of these weaknesses are interrelated (see right-hand image): sometimes one weakness can be used with another weakness to greatly amplify the attack and be able to get past someone’s anonymity. There’s a bunch of anonymizing networks out there; just to name a few: Morphmix, Tarzan, Mixminion, and so forth. But, like I said, I2P and Tor are the two I’ve played the most with. Also, I’m going to try to go more real world with some of these attacks, where this can be actually used vs. some academic stuff. I will be talking about traffic analysis attacks, but I really think application level attacks is probably where the biggest risk is when using these networks.

Threat model – you can’t protect against everything. I mean, a darknet is not going to protect you from someone sitting in your house looking over your shoulder at your monitor. That’s just the way it is. It’s not going to protect you necessarily, depending on the darknet, from a state agency that can actually, in theory, get ISP records from all ISPs in the United States. Depending on who your adversary is, there’re different threat models (see right-hand image).

Some protocols are actually going to be a lost cause, I will talk about that here in a bit. Most of the people who are using these darknets are using HTTP-based protocols, but some of these protocols, like BitTorrent, unless you’re using a heavy modification – it’s kind of a lost cause.

Some users may end up doing things to reveal themselves. For instance, Tor’s model – if you go out and use protocols that give away your identity, or you use the same name on Tor-hidden services as you do on the public Internet, nothing is going to protect you. Or if you allow six billion different plugins in your browser and use Tor, there’s no guarantee that it’s going to protect you.

Also, different attacks give different levels of information. Some just give details about the Client/Host, who it is behind an IP address. If you have 2 IP addresses, it sometimes just reduces the anonymity set, and it reduces the total amount of people you could possibly be. Instead of being an I2P user, you might say it’s an I2P user in Indiana. That would be an example of reducing someone’s anonymity set. Or possibly because of the posts they make and the things they say or the time they make it, you can get an idea of where in the world they are.

There are also active vs. passive attackers. Active attackers are the ones that actually sit there and mess with the network to be able to find out more information. Passive attackers are people who just sit there and sniff traffic but don’t necessarily modify it. Location, location, location – this is kind of similar to active vs. passive. It denotes whether someone is already inside the network, or outside it, like an ISP.

Adversaries, of course, vary by power. The nation states: like I said, depending on how draconian the laws are in a particular country – that makes a huge difference; also the size of the infrastructure. Government agencies have limited resources depending on what they have; they may have the power, in theory, to maybe say: “Hey, give me all records from this, this and this ISP”, to figure out where the traffic is bouncing through. I’m not sure they’d be able to necessarily track it all down.

It could be someone who runs an ISP, or could be someone who runs a whole lot of nodes. I’ll be talking about sybils and sockpuppets later on. To give you a quick overview of what that is, though, it’s, essentially, someone who controls more than one node in a network, and they can have nodes collude to find out more information.

There are also some private interest groups that might be interested, for instance the RIAA and the MPAA – these people aren’t going to be able to get a tap on your Internet connection directly. And there are people like me, schmucks with extra time on their hands.

Now I’m going to briefly cover two major darknets, Tor and I2P, so that the rest of the slides make some kind of sense. Most people make a node diagram: circle here, circle there, with lines between them. I don’t like to do that. I like doing something a little bit more whimsical. So, this is my idea of a node diagram for Tor (see right-hand image). Essentially, you have something that bounces around the network. You have, let’s say, three hops. You might talk to the directory server; it will give you the different Tor routers you can go through. You make a connection to one, make a connection to another, make a connection to a third one. And you make the connection through the circuit. That way they don’t know who the traffic originates from. There’s a level of encryption here between the nodes, which I’ll explain shortly. Essentially, it’s called The Onion Router – Tor – because it has layers, just like an ogre. Think of Chinese nesting dolls.

I2P is a little bit different (see right-hand image). Here you have one-directional tunnels: you have “in-tunnels” and “out-tunnels”. You may have multiple ones. Your out-tunnel eventually goes into someone else’s in-tunnel. On the image we see the server in the left-hand part; my client is here on the right. I can be going out through my out-tunnel and into someone else’s in-tunnel. They set the length of this tunnel, I set the length of that tunnel. I2P allows you to make compromises between latency and anonymity. Obviously, the more hops you have the more anonymous you’re probably going to be, but the longer it will take. I2P makes traffic analysis attacks a whole hell of a lot harder, at least for my personal feeble attempts.

These one-way tunnels complicate things greatly as far as traffic analysis goes. Who’s been in the military? Those who have should know about signals intelligence. Even if you do not know what the traffic is, you know this person at this base just sent a bunch of communication to these folks, and these folks start moving. Even if you do not know what the communication was, it tells you something. When doing traffic analysis, essentially, you’re watching traffic, even if it’s all encrypted – you know who’s talking to whom, timings, and these other things that can possibly reveal information.

I2P is different from Tor in a few ways though. Tor, generally speaking, is meant for connecting to some website out on the public Internet, although there are also Tor hidden services, where you can hide something inside the Tor cloud – I hate the word “cloud”, but it’s semi-applicable here. I2P’s focus is hidden services, for instance eepSites, a type of service you can hide inside I2P that is a website. But you can also hide other protocols like IRC and whatnot. Also, it layers things a little bit differently, and one of its big focuses is to be distributed.

When analyzing Tor, I talked about the directory server. This directory server is controlled by the folks that created the Tor Project. Other people can actually fork Tor and make their own sub-Tor networks after their own fashion. If anybody has an IronKey, those have their own Tor-based network. But since you have this complex infrastructure, somebody has to maintain control over it. If someone takes control of the directory server, that causes issues. Well, I2P wanted to avoid that, so they try to be very distributed.

I2P, like Tor, also has multiple levels of encryption (see right-hand image). You have at least three levels of encryption: essentially, between two participants that are trying to communicate; and also on the tunnel level, on “in” and “out”; and also between each and every single hop. So, in theory, no one but the end point and exit point can see what the traffic is supposed to be.

Now, here’s my silly garlic routing animation (see left-hand image). Essentially, this is what I2P does. I’ve talked a little bit about onion routing and compared it to Chinese nesting dolls. It’s similar in I2P. It sends something out to the exit point, or the end point of a tunnel, and that might be sent to someone else’s in-tunnel. So, this garlic is going out of your particular out-tunnel to multiple different in-tunnels. Unlike Tor, where we have one single circuit, once that hits the end of the tunnel, each clove of that piece of garlic is told: “Ok, you go to this particular end point of this other in-tunnel.”

That’s essentially how I2P and Tor differ. Now we’ll actually get to some common weaknesses. These are going to be semi-non-specific, just to give you an idea of some of the attacks that are out there against these anonymizing networks. Tor has a lot more foothold, but I2P is pretty good too. This first one is going to be more Tor-centric, it’s un-trusted exit points.

Essentially, what an un-trusted exit point comes down to is that anybody can be a Tor router. So, if I want to be a Tor router at my home, I just set myself as an exit point; some traffic will be routed through me, and for some I will be the exit, which is traffic that comes to me and then goes out to the public Internet. The problem is, depending on the traffic they’re sending, that might be unencrypted. It’s encrypted throughout the entire Tor network, but once it hits me and I’m the exit point, I can look at the data. Now, if they’re using an extra level of encryption on top of that, like they’re visiting a site that’s using HTTPS – that’s much better, although there are still people who could use Moxie Marlinspike’s sslstrip.

Besides just looking at the traffic that’s going out the exit point, it could be modifying it also. So, imagine someone sets an exit point on Tor that injects malware into whatever pages you are viewing – completely possible. Or they can inject other things that can reveal your identity, which I’ll talk about here in a bit.

There have actually been incidents of this (see right-hand image). For example, Dan Egerstad and his “Embassy Hack” back in 2007. Essentially, he set some Tor exit points, or at least one, and a bunch of people in embassies who didn’t want the governments that they were in to spy on them decided to use Tor. But they were using non-encrypted protocols like POP3, where username and password were in plaintext once it hit that exit point. So Dan could sit there and sniff their traffic. This could also be web traffic, this could be tons of different things.

A few examples of plaintext protocols. These are protocols where there’s no encryption by default. Data might be passed in clear text or in an easily reversible format like Base64: POP3, SMTP, HTTP Basic, etc. Also, Moxie Marlinspike was doing something similar to this with his sslstrip that I mentioned before. If you set up an exit point and use this, even though they’re using HTTPS, this tool would sit there and go: “Oh, I’m going to redirect you to HTTP.” If you’re not really paying attention to what it says in your URL, you could very well get owned.
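To make the “easily reversible format” point concrete, here is a minimal sketch of what someone sniffing at an exit point could do with an HTTP Basic `Authorization` header. The function name and the credentials are hypothetical, made up for illustration; only the Base64 step reflects how HTTP Basic actually encodes credentials.

```python
import base64
from typing import Tuple


def decode_basic_auth(header_value: str) -> Tuple[str, str]:
    """Recover (username, password) from an HTTP Basic Authorization value."""
    scheme, _, encoded = header_value.partition(" ")
    if scheme.lower() != "basic":
        raise ValueError("not a Basic credential")
    # Base64 is an encoding, not encryption: no key is needed to reverse it.
    decoded = base64.b64decode(encoded).decode("utf-8")
    username, _, password = decoded.partition(":")
    return username, password


# Hypothetical credentials, encoded the way a browser would put them on the wire.
sniffed = "Basic " + base64.b64encode(b"alice:hunter2").decode("ascii")
print(decode_basic_auth(sniffed))
```

Anything carried this way is effectively plaintext to whoever sits at the exit.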

To give you a quick illustration of how these un-trusted exit nodes work, let’s say you want to send some traffic (see left-hand image). So, this guy in the bottom left-hand corner is our client. Alright, which one of these machines do you think is the bad actor; which one’s evil? The one with the goatee of course. You’d better watch more Star Trek. Anyway, we go to the first hop, a layer gets stripped off; we go to the next hop, a layer gets stripped off, etc. So it’s encrypted the entire way, but each point only knows the person who just sent it to him. So, in theory, no one knows both the content and the original person who sent it in. That gets sent out to the exit point, but at that point it’s clear text. The guy can sit there, look at it, sniff it, modify it, and send it back.
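The layer-stripping idea can be sketched in a few lines. This is a toy model only: the XOR keystream below stands in for real encryption and is not secure, the relay names and keys are invented, and Tor’s actual circuit cryptography is considerably more involved. Because XOR layers commute, the toy also does not enforce the order in which layers are removed, which a real design would.

```python
import hashlib
from typing import List


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """Toy cipher: XOR data with a SHA-256-derived keystream. NOT secure."""
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream.extend(hashlib.sha256(key + counter.to_bytes(4, "big")).digest())
        counter += 1
    # zip() stops at len(data), so any extra keystream bytes are ignored.
    return bytes(a ^ b for a, b in zip(data, stream))


def wrap(message: bytes, relay_keys: List[bytes]) -> bytes:
    """Client side: add one layer per relay; the exit's layer is innermost."""
    cell = message
    for key in reversed(relay_keys):
        cell = keystream_xor(key, cell)
    return cell


def peel(cell: bytes, relay_key: bytes) -> bytes:
    """Relay side: strip exactly one layer using this relay's own key."""
    return keystream_xor(relay_key, cell)


# Hypothetical per-relay keys the client would have negotiated beforehand.
keys = [b"entry-key", b"middle-key", b"exit-key"]
cell = wrap(b"USER adrian PASS secret", keys)
for key in keys:
    cell = peel(cell, key)
print(cell)  # after the exit relay peels the last layer, the payload is readable
```

The last line is the whole problem in miniature: once the final layer is gone, whoever runs the exit sees whatever the application sent.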

Mitigation. Tor is for anonymity, not necessarily security. If you use end-to-end protocols that aren’t necessarily encrypted, the guy at the exit point can see your traffic, just like someone sitting on your Local Area Network. So, don’t use plaintext protocols. You should send it end-to-end encrypted. Also, when you’re using your usernames and passwords through these protocols, you are not really anonymous, are you? People who are using public email addresses through Tor network – not so good.

Alright, some other common attacks: DNS leaks and various other protocols, and application level problems. An overview: does all the traffic go through the proxy? Okay, you’re using a darknet, everything is encrypted, and you’re using multiple hops so no one really knows who’s talking to whom. But what if you’re sending all of your traffic through there, and the protocol is not quite right and it’s sending stuff outside the darknet? A common example is DNS leaks. Let’s say, you’re using Tor to visit SecManiac.com. So, I’m visiting SecManiac, and I think I’m secure, all my traffic to him is encrypted and it’s encrypted back, but I don’t want people to know that I’m visiting his site. Well, if there’s a DNS leak, they might know. I might be sending traffic through Tor, but if my machine is not configured right it could be asking the DNS server: “Hey, what’s the IP address of Dave’s box?” So, my ISP knows I’m visiting Dave’s site; they may not know what I’m looking at, but that’s still information. If you are visiting a Tor hidden service or I2P eepSite – same thing: there will be some string of characters ending in .i2p or .onion, and if you’re not configured right, those hidden service names will be sent to a DNS server, where they can be recorded.

So, basically, you need to make sure that the darknet is configured correctly (see left-hand image). We’re talking kind of dual purposes here. I’m talking, in some sense, about how to protect yourself when you’re using a darknet for anonymity, and how to catch someone who’s using it. It’s kind of an interplay, or game of chess. I’m sort of preaching to both sides here, and I think the topic is interesting.

A snooper can also use web bugs, depending on how you have your proxy set up. Let’s say, you have your browser set to send all HTTP traffic through Tor or I2P, but you forget about HTTPS, or possibly FTP. That would be an issue, because a person could use that to embed a link inside a web page and find out who you are. I have some examples of web bugs, but since a web bug can be something like an image you put on a web page, the IP and various other information is reported to the service hosting that web bug. You can use that kind of thing for tracing people down.

HTTPS is a good example, but there’s other application level stuff that can be a problem. JavaScript also is pretty hosed. I’d recommend watching Gregory Fleischer’s Defcon 17 talk, it basically makes you go: “Yeah, JavaScript is a bad idea from the very beginning,” as far as security is concerned, especially anonymity in this case.

Let’s talk about DNS leaks for a second (see right-hand image). Here we have a DNS query, let’s say that’s to some .onion address, or .i2p, or even secmaniac. Even though all my traffic going through the network might be encrypted, that doesn’t necessarily mean that this person – monitored DNS server – doesn’t know who I’m visiting. They might not know what I’m doing, but they know who I’m visiting – that can be bad enough.

A few ways of mitigating these kinds of problems (see left-hand image). In Tor and I2P, someone might want to put a sniffer and use libPcap filter like port 53 to find both TCP and UDP packets and see if any kind of traffic is leaving that shouldn’t. In theory, if Tor is configured right, or I2P is configured right, you’re not going to see that traffic, hopefully.
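As a rough illustration of what a `port 53` capture filter is looking for, the sketch below checks a raw IPv4 packet for TCP or UDP traffic with port 53 on either side. It is an approximation written for this article, not libpcap itself: it ignores IPv6 and fragments, and `fake_packet` is a made-up helper that only builds test input.

```python
import struct

DNS_PORT = 53
TCP, UDP = 6, 17  # IP protocol numbers


def matches_port_53(ip_packet: bytes) -> bool:
    """Return True if an IPv4 TCP/UDP packet has source or destination port 53."""
    if len(ip_packet) < 20 or ip_packet[0] >> 4 != 4:
        return False
    header_len = (ip_packet[0] & 0x0F) * 4  # IHL is counted in 32-bit words
    protocol = ip_packet[9]
    if protocol not in (TCP, UDP) or len(ip_packet) < header_len + 4:
        return False
    # TCP and UDP both start with source port, then destination port.
    src_port, dst_port = struct.unpack_from("!HH", ip_packet, header_len)
    return DNS_PORT in (src_port, dst_port)


def fake_packet(protocol: int, src_port: int, dst_port: int) -> bytes:
    """Build a minimal, checksum-free IPv4 packet purely for demonstration."""
    ip_header = struct.pack(
        "!BBHHHBBH4s4s", 0x45, 0, 28, 0, 0, 64, protocol, 0, bytes(4), bytes(4)
    )
    return ip_header + struct.pack("!HH", src_port, dst_port) + bytes(4)


print(matches_port_53(fake_packet(UDP, 40000, 53)))    # a DNS query leaving the box
print(matches_port_53(fake_packet(TCP, 40000, 9050)))  # traffic to a local proxy port
```

If the darknet client is set up properly, a capture on the outbound interface should show nothing that this check would flag.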

If you’re having some problems, one thing you might want to make sure, at least in Firefox, is to go in there and make sure this particular setting in ‘about:config’ is set to true so it knows to use DNS through the SOCKS connection, through the proxy. This gets a lot more complicated in other protocols, for example when you configure it in IRC client, make sure it does name resolution from IRC client to IRC client. Same thing with Secure Shell, but you’d better be damn sure you have that proxy setting configured correctly.
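The difference between resolving a name locally and letting the proxy resolve it shows up directly in the SOCKS5 request. The sketch below builds the CONNECT request both ways, following the SOCKS5 message layout; the function name is hypothetical, and the Firefox preference being described is presumably `network.proxy.socks_remote_dns`, which is an assumption since the slide is not reproduced here.

```python
import socket
import struct


def socks5_connect_request(host: str, port: int, remote_dns: bool = True) -> bytes:
    """Build a SOCKS5 CONNECT request for host:port.

    remote_dns=True  -> send the hostname itself; the proxy resolves it.
    remote_dns=False -> resolve locally first, which is where a DNS leak happens.
    """
    if remote_dns:
        name = host.encode("ascii")
        address = bytes([0x03, len(name)]) + name  # ATYP 0x03 = domain name
    else:
        # This lookup goes to the local resolver, outside the proxy.
        ip = socket.gethostbyname(host)
        address = bytes([0x01]) + socket.inet_aton(ip)  # ATYP 0x01 = IPv4
    # VER=5, CMD=1 (CONNECT), RSV=0, then address and big-endian port.
    return bytes([0x05, 0x01, 0x00]) + address + struct.pack("!H", port)


# The hostname travels inside the proxied request; no local lookup is made.
print(socks5_connect_request("example.onion", 80))
```

A `.onion` or `.i2p` name can only work in the first form anyway, since no public DNS server knows about it; sending it to one just tells the resolver what you were trying to reach.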

Torbutton should help. When I use Tor or I2P, generally speaking, I use the Tor Browser Bundle, which has all the settings already done for you to help with anonymity. Also, don’t use a bunch of plugins – that’s another big one. Other applications may vary of course. You may also want to try firewalling off port 53 and make sure it can’t go any place, and then the only way out of that particular box is the proxy.

Also, with Tor, one of the things you can do is you can actually set up a local DNS server on your box, and then, if you’re worried about DNS traffic going out, you can point your local machine’s DNS server to point to localhost, and it won’t talk to a Domain Name Server. So, that’s a nice and fairly secure option. And you can make that little setting by editing your torrc file and putting in those flags.

Alright, grabbing content outside of the darknet – this can also be an issue. In this illustration (see left-hand image), I have more of an I2P kind of style node diagram. Let’s say some traffic is being sent through. If someone doesn’t have this configured right, they could be sending HTTP traffic through I2P while HTTPS traffic doesn’t go through it. I2P does various modifications to the traffic to try to increase your anonymity, and you can’t really do that on HTTPS. There have been HTTPS out-proxies, but I’m not sure there are any at this moment. Let’s say you’ve only configured an HTTP one. That traffic may be going through I2P and bounce around and come back to you, and they don’t know who you are. But suppose that web page embeds a file, a web bug, that is accessed via HTTPS. Then it’s possible that you request the page and get it back through I2P, your browser requests the image directly, and then they have your real IP address.

To prevent that from happening, in I2P I used to go in and set the option: “Use this proxy server for all protocols” (see right-hand image). This particular setting isn’t necessarily going for all SSL traffic, as I recall. You can also set SSL proxy that should work. I don’t know if that particular I2P proxy is actually up and running at this point.

Okay, slightly related subject (see left-hand image). Let’s say you’re web surfing around and you visit some website and you’re like: “Hmm, I want to do something a little bit more suspicious.” You’re visiting public Internet first, then you decide to visit using Tor later on when you’re up to doing something you don’t want to be known. Well, if you’ve got a cookie while you’re on the public Internet, and if you’re using the same web browser when going the same place using Tor, you might notice you’ve got the exact same cookie again. Torbutton has various features along with Polipo, this little HTTP proxy that’s meant to filter various identity revealing information out of your HTTP traffic. It might be a concern though if you don’t have Tor configured properly. If you get a cookie off the public Internet, then if you use Tor and go to the same page – the same cookie gets sent.

You can as well make a hidden server contact you over the public Internet (see right-hand image). The last few examples I’ve given have been: you’re a client and you’re contacting some Internet site. In this case, you might be trying to reveal the identity of some hidden server. This is a server inside the I2P network whose real IP address you don’t know. You know its name, but traffic is bounced around between hosts inside the network to be able to get to it. If you can contact it, let’s say with an exploit, for example you have a shell execution exploit or some bad web vulnerability on there – if you can tell it to ping you – well, game over, it may ping you from its real IP address. There are mitigations for this though.

Another example of applications that suck at anonymity is BitTorrent (see right-hand image). There’s a paper written a while back, where they found that most Tor users are only using Tor to hide the contacting of the tracker. Well, if you’re only establishing communication to the tracker over Tor, it’s still contacting the peers directly – that’s revealing your identity right there. Also, though, let’s say the person decides to modify the data, they could add their own IP addresses: “Yeah, I’m one of the people who’s participating in this BitTorrent; contact me,” and when you contact them there’s various identity information that lets you go: “Oh, that’s who you are!”

Also, depending on how the client is configured, another common operation for BitTorrent is to use what’s called a distributed hash table – that’s over UDP. Well, Tor doesn’t really support UDP, so that goes out to the distributed hash table directly and can be scraped for information. Most of these are mitigated if the person decides to send all traffic, including peer-to-peer traffic, through Tor, but that would be really slow. But the distributed hash table one – that’s not mitigated, because if your machine starts using trackerless torrents and sending those packets out via UDP, someone out there harvesting the distributed hash table over the Internet could possibly reveal people’s identity.

So, there’s all sorts of information inside the BitTorrent protocol that reveal who is who. And all this traffic actually has peer ID and port number, so just from the ground up it wasn’t exactly designed for anonymity. There are still modifications that have been done to the one that exists inside I2P that makes it a lot better. But generally speaking, BitTorrent over Tor is not really such a great option.

Ok, yet another example of an application that screwed the pooch, so to speak, is IRC. By default, even if you configure IRC to go through Tor or I2P, there are some things in the protocol that will screw you up (see right-hand image). Who is familiar with Ident? Inside of IRC clients, you can say: “What’s your Ident?” So, if someone does a Whois on you they can find out your username on some box. Well, you can set this information, but depending on the client you’re using it may default to your actual username. For instance, one time I connected to I2P as “hidden” and started looking around at who everybody was, and I realized that while I had a pseudonym, while I was using I2P, if someone did a Whois on me they could see Adrian@(some hostname), so they could tie me to one particular identity inside of I2P.

You can fix this kind of problem by actually going in your IRC client and configuring what you want returned as Ident information (see left-hand image), but by default, depending on the IRC client, it may reveal more information than you actually want.
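Here is a small sketch of where that username ends up. IRC registration sends a `NICK` line and a `USER` line, and the username field of `USER` is what shows up in front of the `@` in a Whois. The helper names are invented for illustration, and the idea that a given client fills the field from the OS login is a general pattern, not a claim about any specific client.

```python
import getpass
from typing import List


def registration_lines(nick: str, username: str, realname: str) -> List[str]:
    """Build the NICK/USER lines a client sends when registering with a server."""
    return [f"NICK {nick}", f"USER {username} 0 * :{realname}"]


def os_login_name() -> str:
    """What a careless client might use as its default username."""
    return getpass.getuser()


# Leaky: the nick is a pseudonym, but the username field is the real login, e.g.
#   registration_lines("hidden", os_login_name(), os_login_name())
# Scrubbed: every field is set explicitly to something generic.
print(registration_lines("hidden", "user", "user"))
```

The fix is simply to never let a default fill those fields; set them by hand and then check with a Whois on yourself.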

Alright, general mitigations (see right-hand image). Make sure your browser is set to send all the traffic to the darknet; I illustrated some of that a bit ago. Look into firewall rules to block all traffic that’s not going out through the particular ports that you know your darknet client is using. Limit plugins used, of course, because this can totally mess you up; a plugin can be used to reveal more information about you, or it can get you to contact the public Internet and as a result reveal your IP address, which may reveal your identity in the long run. Use a separate browser for different tasks. Also, there are two great sites where you can check how anonymous you are. Decloak.net tries a bunch of different techniques that reveal who you are. Panopticlick from EFF is somewhat different; it basically tells you how unique your particular browser user-agent string is, as well as various information that JavaScript and plugins return to the site. So, it can say you are unique amongst so many different people, or how many people share your exact identifier.
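A “one in N browsers” figure can be turned into bits of identifying information, which makes it easier to see how quickly an anonymity set shrinks. The arithmetic below is standard information theory; the function names and the sample numbers are made up, and adding bits across attributes assumes they are independent, which real browser attributes often are not.

```python
import math


def identifying_bits(one_in_n: float) -> float:
    """Bits of information revealed by a trait shared by 1 in N users."""
    return math.log2(one_in_n)


def remaining_anonymity_set(population: float, bits: float) -> float:
    """Expected number of users still indistinguishable after revealing `bits`."""
    return population / (2 ** bits)


# Hypothetical figures: a user-agent shared by 1 in 1024 browsers,
# observed among roughly a million users.
bits = identifying_bits(1024)
print(bits, remaining_anonymity_set(2 ** 20, bits))
```

The same arithmetic applies to the earlier “I2P user in Indiana” example: every extra fact divides the crowd you are hiding in.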

Hidden server-wise, make sure you patch your stuff. If you have a really out-of-date version of some web application, then someone can use some sort of shell injection. Also, you could just not run the box on the public Internet and have it on its own virtual host, let’s say in VMware. The web server is configured to only respond on 127.0.0.1 and can’t send traffic any place else. But the service that is coming in via the darknet is allowed in, the idea being to make sure the server can’t contact anything else outside of its own little network.
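The “only respond on 127.0.0.1” part comes down to which address the service binds to. A minimal sketch, with a hypothetical function name; a real hidden service would do this in the web server’s own configuration, and outbound traffic still has to be blocked separately with firewall rules.

```python
import socket


def open_loopback_listener(port: int = 0) -> socket.socket:
    """Listen on the loopback interface only (port 0 lets the OS pick a port)."""
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    # Binding to 127.0.0.1 rather than 0.0.0.0 means connections arriving on
    # any public interface are never accepted by this socket.
    listener.bind(("127.0.0.1", port))
    listener.listen()
    return listener


listener = open_loopback_listener()
print(listener.getsockname())  # address is 127.0.0.1; the port is OS-assigned
listener.close()
```

The darknet client running on the same box then forwards incoming tunnel traffic to that loopback port, so the service is reachable through the darknet and nowhere else.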

Ok, attacks on centralized resources, infrastructure attacks, and denial-of-service attacks (see right-hand image). This is not so much against individual nodes as against the network in general. I suppose you could try a denial-of-service against an individual hidden service or eepSite in your darknet, but more likely a lot of the attack will be blunted by the other hosts in between you and them taking the damage. When it comes to staying anonymous when you’re DDoS’ing a site, people say: “Why don’t you use Tor to hide who you are?” Because if you try to DDoS through Tor, it would end up, essentially, being a denial-of-service on Tor; you wouldn’t necessarily contact what you’re trying to hit. Well, you would, but at a greatly diminished ability.

There’re all sorts of denial-of-service attacks out there. Starvation attacks is where you can promise some nodes resources but not give them. Partition attacks is where you can cut down the anonymity set of what you have to search. And flooding – the general DoS sort of attack. Attacks on shared known infrastructure can be a problem: if you decide to denial-of-service Tor’s directory servers, that would be a huge issue because then people won’t be able to use the network. Also, total or severe blocking of the Internet would be a huge problem because you’re not going to be able to use the darknet.

There have been a few cases. For instance, China back on September 25th, 2009 blocked access to Tor directory servers, so people who were using Tor in a normal fashion couldn’t connect, they couldn’t find a list of routers to hop through. Also, Egypt, Libya and Iran would block Internet access – well, you can’t get into I2P if you can’t get into Internet access.

If someone blocks the connection to the Tor directory server – well, you ain’t using Tor. With some exceptions, and I’ll talk a little bit about bridge nodes. Essentially, a bridge node is a Tor router that’s not advertised directly. You can email a certain email address at the Tor Project for a list of bridge nodes you can contact. I’ve known cases when you get a bridge node and use it inside the country and then tell other people about it inside the country, and they can use you.

Distributed infrastructure helps, for instance in I2P. There’s no directory server to say which node is which. It’s all just taken care of in a distributed hash table called NetDB. Taking out the dev site might be kind of an issue, but development of I2P is actually supposed to be done over I2P as well, so that might be somewhat difficult. Protocol obfuscation might also help; if someone doesn’t know that someone is using a darknet, they might not attempt to block it. Tor does it to an extent by making traffic look like HTTPS, though there’s a lot of stuff it sends that obviously isn’t. I2P sends out via a random port; different I2P users aren’t necessarily using the same port. And when you start looking at the traffic, it should just look like gibberish. So, that makes I2P fairly hard to block. It’s also sending stuff via UDP and TCP. Total or severe blocking of the Internet though – that takes a little bit more to mitigate. There are some people talking about technologies being able to do that. If someone blocks all Internet access, that’s a bit of a difficulty.

So, people look for ways of getting around that. People end up making ‘mesh’ and ‘store and forward’ networks (see left-hand image). Essentially, these meshed networks might have different boxes in a country with radio communication with each other. If you can contact one of them, you can hopefully get a message out by hopping around until it eventually gets someplace where it gets to the public Internet or whatever network resource you’re trying to go for. With store and forward, if you’re for instance trying to send a message out, and real time is not necessarily something you have to worry about, let’s say it’s an email – if it arrives now or it arrives in two hours may not matter. So, if the message is sent from a phone, it gets sent to a node and then gets into another node, each coming into range of each other – that might be an example of store and forward.

More info on mesh networks: there’s no need to create darknet backbone. Kit-Alpha is one of the projects you might want to look at. Also, there was an article on The New York Times not too long ago about the U.S. actually sponsoring some research in this particular area (see right-hand image).

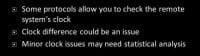

Alright, clock based attacks: this is another place where people can at least reduce the anonymity set of someone using a darknet. Some protocols allow you to check a remote system’s clock. This can be an issue if, let’s say, they’re using local time – well, there are only so many places in the world where it’s 3pm at any one moment. That gives you an idea of where the person is in the world; that reduces the anonymity set. Also, sometimes people have clocks that are just plain off and don’t have them automatically updated via a time server. So, that would be an issue. Minor clock issues can sometimes be statistically analyzed to figure out where somebody is. Someone did some research and tried to figure this out based on temperature. Basically, the temperature of the area of the world they were in at that time will have an effect on a computer’s clock, how fast or slow it runs. And they used statistical analysis to figure out where in the world the person was based on that.

Now, some research that was done, as I recall, was in Steven Murdoch’s paper (see left-hand image). He used his own internal lab Tor because the public Internet Tor is not as stable to get accurate clock information. I2P clock differences is a less accurate method and more of just checking clocks and seeing if they are way off from where they should be.

When I was doing my research on I2P I checked out various eepSites and tried to see how many seconds difference they were from me in time (see right-hand image). Well, if it’s only a few seconds, that could be easily explained by network jitter, because all of these hops you have to go through in your darknet might be causing the latency and that time difference. However, if only one of the hosts is, like, 4000 seconds off from me and I’m getting a response in less than a second – I have a pretty good idea, considering that the clock is that much off, that that’s who it is.

Actually, I should explain this table a little bit better (see left-hand image). I did a harvesting attack where I sat there and logged every I2P user I could, because I have the distributed hash table on my machine as far as information about routers I can connect to is concerned. I logged all that and started hitting all those IP addresses to see if they had a website on them; if they did, I learned what particular web server they were running and what time they had on them. Once I had that information, I would also contact the eepSites I knew about and see if I could correlate them. An example of that might be an attack like: “Hey, what time is it?” Based on the response, you might have a good idea of who it is.
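The clock comparison described above could be sketched roughly like this in Python. This is only an illustration: the host labels, the 30-second jitter allowance, and the 5-second matching tolerance are all made-up assumptions, and the offsets would have to come from whatever protocol exposes the remote clock.

```python
from statistics import median

def flag_clock_outliers(offsets, jitter_allowance=30.0):
    """Return hosts whose clock offset is far from the typical offset.

    offsets maps a host label to (remote clock - local clock) in seconds.
    jitter_allowance is an assumed bound on what latency alone could explain.
    """
    typical = median(offsets.values())
    return {host: off for host, off in offsets.items()
            if abs(off - typical) > jitter_allowance}

def match_by_offset(site_offset, router_offsets, tolerance=5.0):
    """List routers whose directly measured offset is close to the eepSite's."""
    return [router for router, off in router_offsets.items()
            if abs(off - site_offset) <= tolerance]

# Hypothetical measurements, in seconds.
eepsites = {"site-a": 2.0, "site-b": -1.0, "site-c": 4000.0, "site-d": 3.0}
routers = {"router-1": 1.0, "router-2": 4001.0}
odd_sites = flag_clock_outliers(eepsites)
suspects = match_by_offset(eepsites["site-c"], routers)
```

A site whose clock sits within normal jitter tells you very little; it is the badly wrong clock that stands out and can be matched against a router measured directly.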

Mitigations – well, depending on how far off the clock is, I think this attack can be fairly hard to pull off because it takes time to proxy that connection from host to host to host. If the clock is not severely off, that’s probably not going to be a severe issue, I imagine. Having the clocks set with a reliable NTP server would probably help. However, if you set yourself to an unreliable NTP server, that’s not going to be that good. Some mitigations can take place in the darknet protocol itself, where, for example, I2P makes sure that people aren’t too far off from each other. However, that particular timestamp is internal to I2P itself; it doesn’t reflect the time of the host machine.

Another cool example of where people can reveal identities inside darknets is metadata (see left-hand image). Essentially, metadata is data about data. For a photo or a document, this could be stuff like the GPS coordinates of where it was taken, or the username of the person who created it, or timestamps of when it was created or last modified. Lots of document formats have metadata in them, for instance JPG (EXIF and IPTC), DOC, DOCX, EXE – all of these have metadata in them. Some of the things stored are GPS info, sometimes network paths. Basically, you can figure out some information on people’s network just from the documents they put out on a website. Way back in the day, around 1997, Word was actually embedding MAC addresses inside documents.

A few problem samples. I can’t think of any cases where people said “a darknet user has been revealed by metadata,” but here are a few people on the public Internet who had some problems with metadata (see right-hand image). First of all, Cat Schwartz; she posted a cropped picture of herself online. The problem is, EXIF data inside of JPGs also has a thumbnail. Well, the thumbnail didn’t get modified when she cropped the image, and it went down a little bit further than the cropped picture did. So, there you go!

Another example is Dennis Rader, the BTK Killer, who went uncaught for years and years. He sent a floppy disk with a Word document on it to the cops. They got it and looked at the metadata, and it said something like “Dennis”, and the software he used was registered to a church he was working at, so it had the church’s name. There were not many Dennises in that particular church, and it didn’t take too long to figure out who he actually was.

Another example is Nephew chan. At one point in time, on 4chan he posted an image of his aunt who was in the shower. He posted the image from his iPhone, and people managed to pull the GPS coordinates and were able to figure out where he actually lived.

Alright. Mitigations – well, duh, clean out metadata. And of course, there are various apps and formats, so I can’t give you a one-size-fits-all solution for that.
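As one narrow illustration of cleaning out metadata, here is a minimal Python sketch that drops the JPEG segments that usually carry it. It assumes a well-formed baseline JPEG, the marker list is an assumption and not exhaustive, and the function name is made up for this example. A dedicated metadata tool will be more thorough.

```python
import struct

# Segment markers that commonly carry metadata (assumed list, not exhaustive):
# APP1 holds EXIF (including its embedded thumbnail), APP13 holds IPTC, COM is a comment.
METADATA_MARKERS = {0xE1, 0xED, 0xFE}

def strip_jpeg_metadata(data: bytes) -> bytes:
    """Copy a JPEG, skipping metadata segments that appear before the image data."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing start-of-image marker")
    out = bytearray(b"\xff\xd8")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError("unexpected byte where a marker should be")
        marker = data[pos + 1]
        if marker == 0xDA:
            # Start of scan: everything from here on is image data, copy it as-is.
            out += data[pos:]
            return bytes(out)
        # The 2-byte big-endian length counts itself but not the marker bytes.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if marker not in METADATA_MARKERS:
            out += data[pos:pos + 2 + length]
        pos += 2 + length
    raise ValueError("no start-of-scan marker found")
```

Because the EXIF thumbnail lives inside the APP1 segment, dropping that segment also drops the stale thumbnail from the cropped-photo example.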

Ok, local attacks – at this point, it’s probably a lost cause; somebody has to seize the machine (see left-hand image). One of the nice things about the Tor Browser Bundle is that by default, as soon as you close it, it clears out history, cookies, and anything like that. If they have access to your local box, you’re hosed; if someone has physical access to your box, it’s no longer your box. Essentially, at this point it comes down to traditional forensics: data on the hard drive, cached data and URLs, and memory forensics if everything else fails.

Does anyone know what a cold boot attack is? Alright, a cold boot attack is this: someone had encryption keys, the keys were up in memory, and they shut down the machine real quick. Well, within a certain amount of time someone could still pull the data out of the machine’s memory and recover that key. To a degree, this particular attack is only academic, because you have to do it really, really fast. For instance, if you power off your laptop and keep it away from somebody for, like, 20 seconds, they’ll probably have a problem recovering data off of it. There’s a guy who has been doing some research on forensics on live CDs, where memory forensics comes into play.

As far as mitigations are concerned (see right-hand image), there’s of course anti-forensics – you don’t leave logs on a machine in the first place. That’s a great start. Also, people who use live CDs or live USB drives can avoid leaving some tracks; since the CD is read-only media, you don’t necessarily leave logs on it. Same case with a lot of USB drives – as soon as you pull it out and reboot the machine, in theory, everything’s gone. Andrew Case was messing around with actually doing forensics on memory. So, let’s say someone seized the machine while it was running from a CD or from one of these boot USBs; they could actually grab data from memory and figure out what the person has been up to. Of course, full hard drive encryption would also go a long way toward mitigating all of this.

Okay, now we’ll get into some more academic attacks – sybil attacks (see right-hand image). The term comes from the book called Sybil, which is about multiple personalities. Essentially, it’s like a sockpuppet, where someone’s posing as more than one person. The idea is about being more than one person on a network. A lot of times these are not necessarily attacks in themselves, but they make other attacks easier because you have more than one node.

But let’s say this one guy in the corner (see left-hand image) is evil, and he decides to set up more than one node that he controls. He can have these collude to find out more information. Tor and I2P have mitigations against this, but let’s say you’re incredibly unlucky while using Tor, and you end up with these three boxes as your routers, and all three of those are controlled by the exact same person – well, you’re hosed.

Mitigations: there’s no absolute fix to this (see right-hand image). You can make it cost more to have nodes in a network. Who has heard about proof of work algorithms? Way back, there was a spam fighting initiative, where, before any kind of message would be accepted, you had to solve some mathematical problem whose answer was easy to check but hard to find. But there are, I guess, logistical reasons that this never really took off. The same technique has been used in other places though, like Bitcoin for instance. Another example might be password hashes: if someone gives you the word, it’s easy to check whether it matches the hash, but taking that hash and figuring out what the original word is – that’s doable with massive brute-force but not necessarily practical in terms of time.
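To make the "easy to check, hard to do" idea concrete, here is a small hashcash-style sketch in Python. It is an illustration only, not how any particular darknet or Bitcoin does it: the challenge string, the use of SHA-256, and the difficulty of 12 bits are all assumptions picked so the example runs quickly.

```python
import hashlib
from itertools import count

def meets_target(challenge: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Cheap check: does the hash of challenge+nonce start with enough zero bits?"""
    digest = hashlib.sha256(challenge + str(nonce).encode()).digest()
    return int.from_bytes(digest, "big") >> (256 - difficulty_bits) == 0

def solve(challenge: bytes, difficulty_bits: int) -> int:
    """Expensive search: try nonces until one passes the check."""
    for nonce in count():
        if meets_target(challenge, nonce, difficulty_bits):
            return nonce

# Hypothetical use: a node must present a valid nonce before it may join.
nonce = solve(b"join-request:node-42", 12)
accepted = meets_target(b"join-request:node-42", nonce, 12)
```

Verifying takes one hash, while solving takes on the order of 2^12 attempts here. Each extra difficulty bit roughly doubles the work, which is what raises the cost of running many sybil nodes.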

I2P and Tor both put in a restriction to where they try to keep the same /16 IP addresses from being in consecutive hops. For example, let’s say the IP address of your institution is 123.123.something.something, it would try to keep those two from being one hop and the very next hop; the idea being that if someone wants to try to make a bunch of colluding nodes, those might have their own little IP network by themselves. So, basically, they try to keep those separate.
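The /16 rule described above can be sketched with Python's standard `ipaddress` module. This is a simplified illustration of the idea as stated in the talk (no two consecutive hops in the same /16), not the actual path selection code of Tor or I2P; the function names are made up.

```python
import ipaddress

def same_slash16(ip_a: str, ip_b: str) -> bool:
    """True if both IPv4 addresses share the same first two octets."""
    net_a = ipaddress.ip_network(f"{ip_a}/16", strict=False)
    return ipaddress.ip_address(ip_b) in net_a

def path_allowed(path) -> bool:
    """Reject a path if any hop and the very next hop sit in the same /16."""
    return all(not same_slash16(a, b) for a, b in zip(path, path[1:]))

ok = path_allowed(["123.123.1.1", "10.0.0.1", "198.51.100.7"])
rejected = not path_allowed(["123.123.1.1", "123.123.200.9", "10.0.0.1"])
```

An attacker who rents a block of addresses from one provider tends to end up in one /16, so this cheap check forces colluding nodes to be spread across networks.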

Central infrastructure may be more resilient to this; however, it has its own issues. If one central point is deciding who is who and who is doing what, then that’s one point of failure, but if you really secure that point, in some cases that might be a mitigation against sybil attacks. Both I2P and Tor have peering strategies to try to keep you from talking consecutively to people who might cause you an issue. There’s been some academic research done on things like SybilLimit, SybilGuard, and SybilInfer that try to determine who you connect to based on who you know. Who is familiar with Robin Sage? What one security researcher did is he made, if I’m not mistaken, a Facebook profile of this very cute girl who was allegedly an information security researcher, and he was trying to see how many people in the industry he could get to connect with and contact her. A ton of people ended up adding her.

How many people out here have people on Facebook who they barely even know? I’m thinking using social networks as a way of controlling who peers with who may not be the best idea in the world.

Alright, traffic analysis attacks (see right-hand image). There’s a lot of academic work on these. Unfortunately, or fortunately, depending on how you look at it, it takes a much more powerful adversary to pull them off. I really think that if you are using a darknet you should probably worry more about application layer stuff revealing your identity than about these. There’re a lot of subtle variations on profiling traffic. This could be something like the timing of data exchanges, it could be the size of the traffic, it could be the ability to tag traffic. Let’s say the encryption algorithm still allows people to modify the data; if someone can tag it by changing the data, they can track it throughout the entire network. Generally this takes a powerful adversary. It can be somewhat hard to defeat in “low latency” networks.

Regarding low latency networks – there’re networks out there that would be kind of store and forward, where, let’s say, it’s a mail message. It hits a node; if it takes 10 seconds to get to the next node or if it takes an hour – for email it doesn’t really matter that much. However, for web traffic, that really just wouldn’t work. A lot of these attacks involve timing, where some time correlation can be used to determine who is talking to who. When I was looking at I2P I was thinking how you could possibly do a traffic analysis of this; I mean, there’re so many people talking to each other through one-way connections (see left-hand image).

But from the ISP’s viewpoint, you can only have one connection in and one connection out (see right-hand image); they can kind of sit there and watch traffic. I was just sitting there in Wireshark and trying to figure out which peer was which. And I knew the right answer because I was able to go into I2P itself and see who my partners in communication were, and I still had issues.

To give you an example of correlating traffic, let’s say some client sends 5MB in and another one receives 5MB and sends out 8MB, and the former one receives 8MB – even if you can’t read the data, you know who’s talking to who (see left-hand image). It could be things like timing that can also reveal information in this context.
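The byte-count correlation in that example can be sketched in a few lines of Python. The host names, the numbers, and the 5% tolerance are hypothetical, and a real observer would need per-window counters and would have to cope with padding and protocol overhead.

```python
from itertools import combinations

def likely_pairs(flows, tolerance=0.05):
    """Find host pairs whose traffic volumes mirror each other.

    flows maps a host label to (bytes_sent, bytes_received) in one time window.
    Two hosts match when what one sent is close to what the other received,
    in both directions.
    """
    def close(x, y):
        return abs(x - y) <= tolerance * max(x, y, 1)

    pairs = []
    for a, b in combinations(sorted(flows), 2):
        sent_a, recv_a = flows[a]
        sent_b, recv_b = flows[b]
        if close(sent_a, recv_b) and close(sent_b, recv_a):
            pairs.append((a, b))
    return pairs

MB = 1_000_000
observed = {
    "client-1": (5 * MB, 8 * MB),                # sends 5MB, gets 8MB back
    "client-2": (8 * MB + 1000, 5 * MB - 2000),  # the mirror image, plus overhead
    "client-3": (1 * MB, 40 * MB),               # unrelated download
}
matches = likely_pairs(observed)
```

Nothing here reads the payload; the sizes alone are enough to suggest who is talking to who.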

Also, things can be done to affect timing (see right-hand image). This is where sybil attacks can help augment traffic correlation attacks. Let’s say someone is sitting there watching the timing; that can reveal information. They can also just sit there and kind of control how fast the traffic goes through them; this can be similar to a tagging attack. The way I2P works is it signs the data, so if someone is modifying the data it’s going to be an issue. I suppose if you slow down the packets and put a certain rhythm to them, you might be able to follow them along the line. There’re also people who have done various attacks in Tor, changing the load on certain nodes to figure out who’s talking to who, or figure out who’s going through which nodes and reduce the anonymity set as well.

Mitigations for this (see left-hand image) would be things like more routers. The bigger the network is, the harder it would be to find the smaller needle in a much bigger haystack. Also, people talk about using Entry Guards: in Tor, if an attacker is both the first hop and the last hop in your circuit, it’s very easy for them to figure out who you are and what data you’re sending. If the attacker is the exit point, they’re seeing unencrypted traffic, assuming you’re not using an encrypted protocol. If they’re also your first node, the entry point, they see the amount of traffic you’re sending, and it’s much easier to figure out that this person is the one sending the data coming out of this exit point.

So, Tor does a couple of things to mitigate this. One would be Entry Guards, where it chooses a certain set of nodes that you always contact first. If it randomly chose people to peer through every single time, eventually the attacker would be both the exit point and the first node you hop in to. By choosing a certain set that you always use as your entry points, you avoid that, unless you have really bad luck and choose a malevolent peer the very first time.
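A back-of-the-envelope model shows why sticking with a fixed entry point helps. The sketch below is a simplification under stated assumptions: the attacker controls the same fraction `c` of entry and exit selections, hops are chosen independently, and the client uses a single guard. Real Tor path selection is weighted and more involved.

```python
def p_compromised_random_entry(c: float, circuits: int) -> float:
    """Chance that at least one circuit has attacker entry AND exit,
    when a fresh random entry is picked for every circuit."""
    return 1 - (1 - c * c) ** circuits

def p_compromised_with_guard(c: float, circuits: int) -> float:
    """Same chance when one guard is picked once and reused:
    the guard must be bad (probability c), and then some exit must be bad too."""
    return c * (1 - (1 - c) ** circuits)

# Hypothetical numbers: attacker holds 5% of the network, user builds 1000 circuits.
without_guard = p_compromised_random_entry(0.05, 1000)
with_guard = p_compromised_with_guard(0.05, 1000)
```

Under this model the random-entry risk creeps toward certainty as circuits accumulate, while the guard case can never exceed `c`: most users are never exposed, and the unlucky few who picked a bad guard are exposed a lot.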

One-way tunnels can help because they definitely seem to confuse information, at least when I’m trying to sniff traffic in I2P. Short-lived tunnels may help so that you’re not sending as much traffic through the same nodes. Basically, you use one set of nodes to route through for a little while, and then it changes to a whole new set of nodes. Better peer profiling helps to figure out who’s a bad actor, like if you know that a person only tends to send traffic in certain ways or at certain times. Signing of the data – I know I2P does signing of the data to make sure it hasn’t been modified; I’m pretty sure Tor does as well. Fixed speeds are another idea; some networks have been proposed that keep people from doing timing attacks by having everyone always send data at the same speed, which could help upset some correlation.

Padding and chaffing – if you’re sending data out there and you’re worried about people doing analysis on the amount of traffic you’re sending, padding it means it’s always the same size. Chaff would be kind of the opposite thing: someone sends out a bunch of data that’s padded, and then a node drops the unneeded data before sending it on to the next node, so the sizes of packets going between this node and that node can’t be easily correlated. Non-trivial delays would help in some cases, and that goes back to some of the stuff I covered earlier.
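Here is a minimal Python sketch of the padding half of that idea: every message goes out as one or more fixed-size cells, so an observer only sees multiples of the cell size. The 512-byte cell and the 4-byte length prefix are assumptions for this example, and in a real network the cells would be encrypted so the padding is indistinguishable from data.

```python
import os
import struct

CELL_SIZE = 512  # assumed cell size for illustration

def pad_to_cells(message: bytes, cell_size: int = CELL_SIZE):
    """Prefix the real length, pad with random bytes, and split into equal cells."""
    framed = struct.pack(">I", len(message)) + message
    shortfall = -len(framed) % cell_size  # bytes needed to reach a full cell
    framed += os.urandom(shortfall)
    return [framed[i:i + cell_size] for i in range(0, len(framed), cell_size)]

def unpad(cells) -> bytes:
    """Reassemble the cells and cut the message back to its real length."""
    framed = b"".join(cells)
    (length,) = struct.unpack(">I", framed[:4])
    return framed[4:4 + length]

short_cells = pad_to_cells(b"hi")
long_cells = pad_to_cells(b"x" * 1000)
```

A 2-byte message and a 500-byte message both leave as a single 512-byte cell, which is exactly the size information this mitigation is trying to hide.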

Intersection and correlation attacks – this can be related to some of the earlier attacks as well (see right-hand image). This can be as simple as knowing who is up when a hidden service is available. Let’s say you’ve logged all the people you know inside I2P. And you log whenever this particular eepSite, the hidden web server, is up. If you notice that one particular I2P router is down at the exact same time that this eepSite is down and it’s always like that, that might be an example of a correlation attack you could do.

Techniques can be used to reduce the anonymity set. I suppose someone could start knocking various machines off the Internet: if they knock yours off and the eepSite is still up – ok, that must not be you, and so on and so forth. Application flaws can also reduce the anonymity set. I’ve mentioned before, when I was doing some research on I2P I was checking what particular web server software each machine was running. Well, if I log all this and I know you are running this particular version of Apache, I only have to check boxes that have that particular version of Apache. I’ve reduced the anonymity set; I’ve reduced the number of boxes I actually have to check to see whether or not it’s the same person. This also goes back to harvesting attacks, where you profile the different nodes in the network.

Here’s an example of a simple correlation attack (see left-hand image). Let’s say they’re trying to contact a Tor hidden server. They go and check to see whether or not it’s up, and then they check every other node in the network really quickly to see if they are up. Eventually, if one is down at the same time as the hidden server, that might give you an idea that that’s the same person. Within a really big network, this attack would be difficult to pull off. But it’s a really simple example of a correlation attack.
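The uptime correlation just described boils down to intersecting observations. A rough Python sketch, with hypothetical router names and probe results, and ignoring the real-world noise of routers that are down for unrelated reasons:

```python
def consistent_routers(all_routers, observations):
    """Keep routers whose up/down state matched the hidden service in every probe.

    observations is a list of (service_up, routers_seen_up) pairs,
    one pair per probing round.
    """
    candidates = set(all_routers)
    for service_up, routers_up in observations:
        if service_up:
            candidates &= routers_up   # the host must have been up too
        else:
            candidates -= routers_up   # the host cannot have been up
    return candidates

routers = {"r1", "r2", "r3", "r4"}
probes = [
    (True,  {"r1", "r2", "r3"}),
    (False, {"r1", "r4"}),
    (True,  {"r2", "r3", "r4"}),
    (False, {"r3"}),
]
suspects = consistent_routers(routers, probes)
```

Each probing round shrinks the anonymity set a little; with enough rounds, and a small enough network, only one candidate may be left.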

Let’s say you know the IP addresses of a bunch of the routers, and you want the routers that actually host an eepSite (see right-hand image). You might be able to find out what software that eepSite is running. Then, all the IP addresses you’ve harvested out of the distributed hash table, you can check each one of those to see whether or not it’s running the exact same version of the software. Then, each one that is running the same version of the software, you can request that site. For instance – this is one of the attacks I was doing – let’s say there’s some site called somesite.i2p. I might request it directly and go: “Ok, this is the certain software that you’re running and you’re returning that information to me.” Now I want to see who has the same server software, and in my host header, in my HTTP protocol, I’m going to request that particular website from you. If you return that website to me, I know it’s you. You could actually de-anonymize some people in I2P that way.
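The two steps above, narrowing by server software and then asking each remaining box for the site by name, can be sketched offline in Python. Nothing here touches the network: the addresses (from the documentation range), the banners, and the function names are all hypothetical, and the request is only built, not sent.

```python
def narrow_by_banner(target_banner, harvested):
    """Keep only harvested IPs whose web server banner matches the eepSite's."""
    return sorted(ip for ip, banner in harvested.items() if banner == target_banner)

def build_vhost_probe(hostname: str) -> bytes:
    """Build a plain HTTP request that asks a server for a specific site by name."""
    return (
        "GET / HTTP/1.1\r\n"
        f"Host: {hostname}\r\n"
        "Connection: close\r\n"
        "\r\n"
    ).encode("ascii")

# Hypothetical harvest: IP address -> Server header seen on its public web port.
harvested = {
    "192.0.2.10": "Apache/2.2.14",
    "192.0.2.11": "nginx/0.8.54",
    "192.0.2.12": "Apache/2.2.14",
}
candidates = narrow_by_banner("Apache/2.2.14", harvested)
probe = build_vhost_probe("somesite.i2p")
```

If one of the remaining candidates answers that request with the eepSite's content on its public address, the hidden site and the public IP are the same box. That is also why stripping the server header and not binding the web server to the public interface both matter.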

General mitigations – of course, more nodes would help. The more nodes there are, the harder it is to pull off these attacks. I think I was only dealing in I2P with around 6000 nodes at a time, and it was doable on a home machine with a cable modem, but the more nodes you have, the more difficult that would be. If you’re hosting a server inside I2P and it’s an HTTP server, it strips out that server software header, so it’s not as easy to correlate. Giving out less data, of course, makes it a lot harder to pull off these kinds of attacks because the attacker can’t reduce the anonymity set and has to check more nodes.

Another thing is to make harvesting attacks harder to do. For instance, it’s easy to harvest Tor routers because you can access the directory server and it gives you all that information; however, you can’t easily harvest bridge routers because they don’t put all the information in one place. That might be an example of making harvesting and scraping harder to do.

Ok, we’re almost done. If you want to have more information on various research into anonymity networks (see left-hand image), check out the archive that Freehaven has. Also, if you want more information on different threat models, I2P has a great page on that. I have a general darknets talk that I did earlier here at AIDE. And I also have a video and article on de-anonymizing eepSites inside of I2P.

I’d like to say a few thanks to the conference organizers for having me here; Tenacity for helping me get to Defcon; my buddies at Derbycon and the ISDPodcast; and also the Open Icon Library for helping me out with a lot of the “artwork” (see right-hand image).