James Denaro, patent attorney at CipherLaw, delivers a presentation at Defcon highlighting the legal risks InfoSec researchers may run into in their work.

The topic for today is how to disclose or sell an exploit without getting in trouble. I’m Jim Denaro. I’m an intellectual property attorney based out of Washington, D.C. I focus my work exclusively on information security technologies.

So, here we go. Because I’m an attorney and this talk does have a legal component (although it’s not really a law talk), I have to give the standard disclaimer (see right-hand image): this presentation is not legal advice about your specific situation or your specific questions. Even if you ask me a question, we are still talking about hypotheticals; only once we establish an attorney-client relationship would we be talking about your specific problem and giving specific legal advice. This presentation alone does not create an attorney-client relationship; we can maybe do that later.

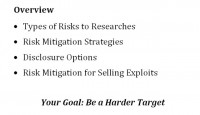

This (see left-hand image) is a quick overview of what we’ll try to accomplish here. We’re going to cover the types of risks researchers face; risk mitigation strategies researchers can use to reduce those risks; some options for disclosing a vulnerability with less risk; and some of the risks associated with selling an exploit. The overall goal is to make yourself a harder target. If someone ever asks you, “Can I be sued if I do this or if this happens?”, the answer is always “Yes.” You can always be sued by anybody for anything at any time. The only question is who is going to win. The goal is to make it more likely that you will win, which discourages anyone from suing you in the first place.

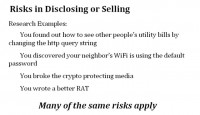

So, let’s start with some great examples of the kinds of research activities that might get somebody in trouble (see right-hand image). These are generally real-life cases. For example, you found out how to see other people’s utility bills by changing the HTTP query string. I talked to someone at the party the other night who had done exactly that; he was wondering what to do about it. Another example: you discover your neighbor’s WiFi is not protected. How did you find that out? Yet another instance: you broke the crypto protecting some media you had. It’s getting a little more serious now; that’s actual money at stake. Maybe you wrote a better remote access tool; that sounds like you might make a lot of money.

Many of the same risks apply, surprisingly enough, whether you are just changing HTTP query strings or actually taking apart a DVD. So, in general we’re talking about techniques, which I’ve defined here (see left-hand image) across a broad spectrum: everything from a technique that might be used for denial-of-service attacks to something that is essentially investigatory web browsing.

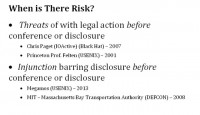

Okay, first, when is there risk for a security researcher? There are three general points where we see risk starting to show up (see right-hand and bottom images). One: there can be a threat of legal action before you go to a conference or make the disclosure; there are some examples listed here. Two: you might be the recipient of a legal action seeking an injunction barring you from disclosing something before a conference.

At that point we’ve moved from mere saber-rattling to an actual lawsuit being filed against you. Three: legal action can be initiated against you after you make the disclosure. These are all real examples.

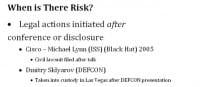

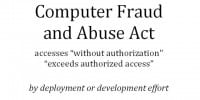

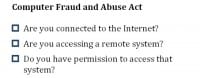

Your #1 concern is typically going to be the Computer Fraud and Abuse Act (see right-hand image); you’ve probably heard a lot about it lately, perhaps here or at other conferences. The main issue is that it prohibits access “without authorization” or “exceeding authorized access.” You’re most likely to run into these problems at two points: in the investigatory phase of working on your technique, and when you create a tool that implements the technique, because the tool itself may perform the prohibited act.

Everyone’s talking about how vague the notion of “authorization” is in the Computer Fraud and Abuse Act, so I’ve created a handy checklist (see left-hand image) to figure out whether you might have a Computer Fraud and Abuse Act problem. Here it is. Are you connected to the Internet? Probably. Are you accessing a remote system? Probably. Do you have permission to access that system? This is the really hard question; it’s genuinely difficult to know whether you have permission. If you saw a banner go by that said “You don’t have access,” you probably don’t have access. But there are many cases where it’s not so clear, and that’s where you get the Andrew Auernheimer situation: he was querying a public-facing API repeatedly, and no one asked him to do that, but there was no banner and no clear prohibition against it. It was a public-facing API, after all. Figuring out whether you have permission is where the risk lies, but those three questions are really all it takes.

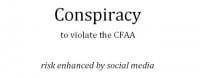

Unfortunately, it’s not just about what you do. The Computer Fraud and Abuse Act is also about what your friends do. I believe the risk of being caught up in a conspiracy to violate the Computer Fraud and Abuse Act is certainly heightened by the prevalence of social media today. If you’re on Twitter or some other easy-to-use social media platform, talking to your friends about how you might do something, or answering questions about how to do a certain thing with a technique you’ve developed, you’re starting to head down the road of conspiracy.

Conspiracy typically requires an overt act, and merely discussing something with someone typically isn’t one. But if you start providing technical support for something someone else is doing, you’re definitely increasing the risk of being caught up in a conspiracy to violate the Computer Fraud and Abuse Act, if not of violating it yourself.

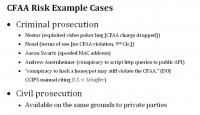

We’ve got some examples here (see right-hand image) where the CFAA has been applied. It’s helpful to look at examples because they show how the law is actually being applied, and we can compare what we’re doing to what has happened to other people in the past and see how close those comparisons are. And since we’re in Las Vegas, we absolutely have to talk about the case of Nestor.

Nestor was really into video poker, and he liked to play and play and play. He got really good at it. He played so much that he discovered a bug in the video poker software: he could play one type of game and bet a lot of money, then switch to a different game, and a multiplier would be applied to his bet. So when he won, he got an enormous payout. He figured out how to reproduce this bug very efficiently, so he and his friends were doing it and making a lot of money. And eventually, as these stories always end, he got caught. He was charged with, among other frauds, violating the Computer Fraud and Abuse Act.

As we saw a few moments ago, the CFAA is mostly about unauthorized access, or exceeding the authorization you had. He never touched the firmware or took the machine apart; he just sat there putting money in and pushing the buttons on the surface of the machine. How that could exceed authorized access to a video poker machine is absolutely mind-boggling. Nonetheless, those charges were brought against him. Ultimately, the Department of Justice did not pursue them, and the CFAA charges were dropped. Prosecutors went ahead with other fraud charges, but for some period of time he was facing Computer Fraud and Abuse Act charges for doing exactly that.

It’s also worth looking at the tragic case of Aaron Swartz, who spoofed his MAC address to download journal articles; that was charged as a Computer Fraud and Abuse Act crime. Andrew Auernheimer allegedly conspired to run an automated script that plugged in identifiers for iPads to retrieve email addresses. He’s doing several years in federal prison for that.

It’s also worth noting that the Department of Justice has said in its manual on the Computer Fraud and Abuse Act that conspiracy to hack a honeypot can violate the CFAA. There’s really no end to the sorts of things that can violate the CFAA. So the Computer Fraud and Abuse Act almost acts as an ex post facto law: the Department of Justice is able to look at what you did after the fact, and if they don’t like it, or don’t like you for whatever reason, you are likely to be on the wrong end of a CFAA prosecution. The Computer Fraud and Abuse Act also provides a civil cause of action, so the company targeted by an exploit can also sue whoever accessed its system without authorization.

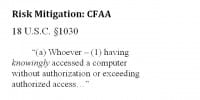

So, the question is whether there’s anything we can do to reduce our chances of being on the wrong end of this kind of case. We won’t go too deep into the statute, but there are some keywords we can obviously identify. Here we have: “Whoever ... having knowingly accessed a computer without authorization.” Another part of the statute: “Whoever ... intentionally accesses a computer without authorization.”

One thing you can do is try to avoid unintentionally creating evidence of knowledge and intent. That’s hard to do for yourself if you actually intend to do something, but at least you can avoid doing it in connection with other people.

For example, I always suggest that you not direct information about how to use a technique to someone you suspect, or have reason to know, is likely to use it illegally.

Be careful about providing technical support for some clever new technique you developed. If I were your lawyer and someone tweeted at you asking how to make something more effective, I would advise you not to answer that tweet.

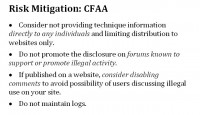

This slide (see left-hand image) goes into a bit more detail, with some more approaches you might take. Do not provide information directly to individuals, especially if you’re not sure who they are or what they might be up to; consider posting things only on a website. Do not post information to forums that are generally known to promote illegal activity. If you publish on your own website or otherwise control the post, consider disabling comments so people can’t discuss potentially illegal uses of your technique there. And lastly, don’t maintain logs. That’s enough on the Computer Fraud and Abuse Act for now; there’s not a whole lot you can do about it beyond just being careful.

Let’s move on to temporary restraining orders (TROs). This is particularly timely, because you may have read the story about the VW Group and the Megamos encryption used in vehicle immobilizers. Some European security researchers had discovered a flaw in the encryption used in the immobilizers on VW Group cars, such as Porsche, Audi, and Bentley. They were going to present it at the USENIX conference in Washington, D.C. in a few weeks, and they got slapped with a temporary restraining order preventing them from presenting their findings at the conference.

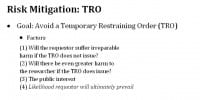

How did this happen, and how do we keep it from happening again? We’ve seen this here at Defcon and at Black Hat, where talks have been stopped by a temporary restraining order. So let’s take a quick look at the factors (see right-hand image above) that courts weigh when deciding whether to grant a TRO preventing a researcher from disclosing information about a vulnerability.

#1: Will the requestor (in this case, the VW Group) suffer irreparable harm if the TRO does not issue? That’s pretty easy to imagine: you’ve got an embedded system and someone has figured out how to break it. It’s going to be almost impossible to update it within a reasonable amount of time, and doing so is usually expensive. Basically, “irreparable harm” means that money isn’t going to fix it very easily. So that factor goes in VW Group’s favor.

#2: Will there be an even greater harm to the researcher if the TRO does issue? Your paper got delayed; it’s hard to see that as a huge harm. We might feel really bad about it, but compared with the sums of money the VW Group would have to pay to fix this, it doesn’t look too good for the researcher.

#3: The public interest. This is kind of a fun one, because we might think the public interest clearly favors disclosing the vulnerability so that it can be fixed, but the court is probably going to go the other way and find that the risk of VW’s Porsches and Bentleys being stolen weighs more heavily in the public interest than having your really obscure crypto talk go forward.

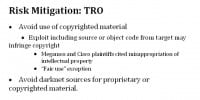

The last factor is the likelihood that the requestor will ultimately prevail. This is really the one we need to focus on, because the VW Group has to have a cause of action. They can’t just come in and say, “We don’t like it.” They have to say, “Here’s why you need to stop: you did something bad to us.” In the Megamos case, and also in the Cisco disclosure case, what we had was the use of copyrighted material. So the obvious advice is to avoid using copyrighted material: if you include source code or object code from whatever you’re working on, that gives leverage to whoever wants to stop you from disclosing it.

There is a “fair use” exception if you use little bits and pieces of code, but you can’t just declare, “Well, this is going to be fair use.” It matters how much you use, along with other factors very specific to what is actually going on in your case. Just try to avoid it if you can. It may not always be possible, but to the extent you can, do. Also, avoid darknet sources for this material. In the Megamos case the court actually discussed the fact that the researchers obtained some information about how the Megamos system worked through sketchy channels. I don’t recall exactly where they got it, but it was some sort of BitTorrent or P2P source; it wasn’t from the VW Group or Megamos.

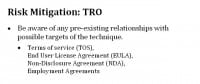

Another thing to do is be aware of any pre-existing contractual relationships you as a security researcher might have with the target of your work. These can take the form of Terms of Service, an End User License Agreement, a Non-Disclosure Agreement, or an Employment Agreement. An End User License Agreement might very well have provisions regarding reverse-engineering the software, for example, which you might be doing as part of your exploration, and that could give someone leverage to try to stop you. Pretty much every piece of software you get comes with some kind of license agreement. You came to it legitimately, right? So you’ve agreed to a license that may prohibit you from doing certain things with that software. There’s not a whole lot you can do about that, but at least you can be aware of the risk.

How far you need to go in mitigating the risk depends somewhat on the techniques you’ve used in your research. If you’ve done things that clearly resemble what has gotten other people prison time, you need to be careful, or take more aggressive mitigation steps, perhaps hiding some of the information about what you did. For example, if in the Megamos case no one had identified that it was the VW Group’s system that had been compromised, VW Group would not have been able to seek a temporary restraining order against the researchers. So perhaps there’s an opportunity here for the conference-going community to create a track where people could present things that we recognize have to be kept quiet, sort of like a confidential disclosure: “This is going to be really cool, but we can’t tell you what it is, because then you wouldn’t get to hear it.” That’s maybe one approach.

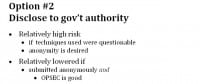

Now I’d like to talk about some ways of making a disclosure that are relatively less likely to get you in trouble. You can disclose to the responsible party. That’s what the responsible disclosure paradigm is all about: you find a problem with a system and you tell whoever is running it. Unfortunately, this is actually relatively high risk, and the risk scales with how questionable the technique you used to find the vulnerability was.

So, if you were connected to the Internet and accessed a remote system, they may ask, “You didn’t have permission? That’s how you did it?!” It may not be a great idea to tell them about it, because if they don’t like it, they have an action against you. If you’re an inconvenience to them, that’s a problem for you. You might think you’re doing them a favor; they might not agree. If you can submit it anonymously to the vendor or responsible party, that’s great, depending on how good your OPSEC is. A lot of times you think you’re anonymous, but you’re not as anonymous as you thought or hoped. That’s a risk in itself that you need to consider. You can also submit it to a bug bounty program, where you may be at less risk.

You could perhaps disclose to a government authority. You may doubt it will ever reach the vendor, but again, if your techniques are questionable, you might not want to submit it to a government authority, since you may have an interest in keeping your identity anonymous. You can try to submit it to the government anonymously, but I don’t know how much we can really trust that anymore.

You know, this is somewhat of a legal talk, and you almost never hear a legal talk where someone will tell you something for sure, like “Absolutely, 100%, you will not get in trouble if you do this.” But fortunately this is one case where there is a group of people who really don’t have to worry about getting in trouble under the Computer Fraud and Abuse Act when they disclose a vulnerability, and here they are (see left-hand image). It’s OK to disclose if you’re one of these people.

We’re thinking about ways to let security researchers make disclosures while keeping the risk as low as possible. So we’re working on a pilot program in which attorney-client privilege can be leveraged to hide a security researcher’s identity, and the techniques used, when making a disclosure. The concept works like this: the researcher discloses a vulnerability to a trusted third party, which would be an attorney, and only to the attorney. It’s critical that this be a completely confidential disclosure, so that no one on the outside can get to it. The trusted third party does not publish the vulnerability on the researcher’s behalf; however, it does disclose the vulnerability to the affected party, whoever has the vulnerability. The researcher remains anonymous throughout the entire process.

This may be useful if there’s no better option. It’s a little cumbersome and has some side effects, chiefly that because the researcher remains anonymous, they don’t get public credit for the research. But it is one possible way for a researcher to disclose while remaining about as anonymous as one can possibly get. This is a pilot program that we’re currently working on and still working the bugs out of. If anyone is interested in talking to us further about it, we’ll definitely welcome your input; please see me afterwards.
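The information flow in the proposed arrangement can be illustrated with a toy Python model. This is only a sketch of the confidentiality property described above, not a description of how the pilot program actually operates. The class names (`Report`, `Notice`, `TrustedThirdParty`) and the sample values are hypothetical, and the privilege itself is a legal protection that no code can provide. The model shows one thing: the full report stays with the attorney, while the notice to the affected party carries the vulnerability but neither the researcher’s identity nor the techniques used.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Report:
    """Everything the researcher gives the attorney (hypothetical structure)."""
    researcher: str      # identity: stays with the attorney
    techniques: str      # how the flaw was found: stays with the attorney
    affected_party: str  # who runs the vulnerable system
    vulnerability: str   # description of the flaw itself


@dataclass(frozen=True)
class Notice:
    """What the affected party receives: no identity, no techniques."""
    affected_party: str
    vulnerability: str


@dataclass
class TrustedThirdParty:
    """Hypothetical model of the attorney acting as the sole recipient."""
    _confidential: List[Report] = field(default_factory=list)

    def receive(self, report: Report) -> None:
        # The full report is held in confidence and never passed on as-is.
        self._confidential.append(report)

    def notify_affected_parties(self) -> List[Notice]:
        # Only the affected party and the vulnerability details go out.
        return [Notice(r.affected_party, r.vulnerability) for r in self._confidential]

    def publish(self) -> None:
        # In the scheme as described, the intermediary never publishes.
        raise PermissionError("the trusted third party does not publish disclosures")


# Example (hypothetical values): one report in, one identity-free notice out.
ttp = TrustedThirdParty()
ttp.receive(Report("researcher-1", "manual testing", "ExampleCorp", "auth bypass in login form"))
notices = ttp.notify_affected_parties()
```

The sketch makes `Notice` a separate type with no identity fields, rather than redacting fields on the original report. As a result, the outgoing message has no field through which the researcher’s identity could leak.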

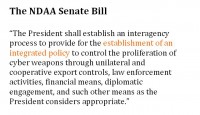

Let’s now turn, very quickly, to selling. Right now there is no law in the U.S. that prohibits selling an exploit, though that is likely to change in the not-too-distant future. For now, there’s not much to worry about, unless the techniques you used in developing your exploit have some problem (going back a few slides), in which case you still have a problem. But the sale itself is not going to get you in trouble. However, there’s a lot of focus on this market now. Here are some recent articles (see right-hand image) from May 2013: “Booming ‘zero-day’ trade has Washington cyber experts worried” and my favorite, “The U.S. Senate Wants to Control Malware Like It’s a Missile.” Well, this stuff is dangerous!

Every year Congress has to pass the National Defense Authorization Act, which sets the budget for DoD and includes a bunch of other provisions that get stuck in there. This year’s bill, for 2014, hasn’t been passed yet; it’s still in Congress. The Senate version has provisions that seek to begin the process of regulating the sale of exploits. The House version doesn’t include this; for now it’s only in the Senate version, but I think this is where things are headed. The bill says that the President shall establish a process for developing a policy to control the proliferation of cyber weapons through a whole series of possible actions: export controls, law enforcement, financial means, diplomatic engagement, and so on.

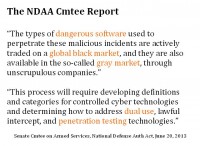

The Senate Armed Services Committee, which considered the bill before it went to the full Senate, included some commentary on this (see right-hand image). They referred to dangerous software, a global black market, a gray market; it starts to look really bad. But they note that there needs to be a carve-out for dual-use software and pentesting tools.

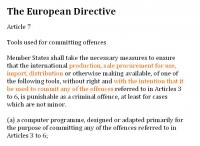

In Europe, the European Parliament recently passed a directive (see left-hand image); they’re a little bit ahead of us. All the member states will be required to enact this prohibition on the sale of tools (basically, exploits) in short order. The provision prohibits the production, sale, procurement for use, import, and distribution of tools that could be used to commit the enumerated offenses, which cover pretty much all the bad things you can think of doing with a computer.

However, there’s an exception (see right-hand image), and it’s a very important one: for tools created for legitimate purposes, such as testing the reliability of systems. The directive further notes that to violate this law, there must be a direct intent that the tools be used to commit some of the offenses. In both the U.S. and Europe, we’re seeing a trend that really comes back to the definitional problem: how we define what an exploit is, and how we make sure that legitimate tools can still be bought and sold.

So this is kind of prospective. We don’t know what the laws are actually going to look like, but I would start thinking about dual-use tools. If you write something, don’t package it as the next greatest hack; you are creating pentesting tools. I’m sure many of you have used Copy II Plus, which is backup software, and its manuals have very elaborate disclaimers saying it is strictly for backing up your floppies, not for making illegal copies. That’s where exploits will go. Some exploits will never pass as dual-use tools; if you have the nuclear-missile equivalent of an exploit, it’s hard to justify its pentesting value. But a lot of tools will fall into this area, and that’s perhaps where they should go.

Here are some other things you might do if you are selling. Know your buyer, to the extent you can. What may happen is that someone in the U.S. sells an exploit, it goes through some channel, and it comes back and gets used against some U.S. interest. We might not hear about it because it may be secret, but this will happen, and then there will be a huge drive to stop it very quickly. It’s the same dynamic as when someone is murdered with a certain type of weapon and that weapon has to be banned; that’s going to happen here. Laws in this country are created in a very reactionary way, and I expect that trend to continue here. If you are selling something, don’t sell it through a channel where it will end up in a country under a U.S. embargo. Maybe your best bet is just to sell it to the U.S…

Ask your buyer for assurances, so that you don’t have knowledge that it’s going somewhere it’s not supposed to go. You could be lied to, but you can’t control everything, right? At least you can get an assurance that it’s not going to be used in some illegitimate way.

And you can always use disclaimer language. I have some nice examples here (see left-hand image). The large text at the top is actually from a software product many of you have probably used many times; it’s good stuff. I’ve highlighted probably the best of its operative language. If you are selling something, be sure to use disclaimer language along these lines; it can help protect you from being charged as complicit in any illegal use to which the software might eventually be put.

Lastly, I’d like to highlight the little paragraph at the bottom, which is from the End User License Agreement for Apple’s iTunes Store. It requires that you agree that “…you will not use these products for any purposes prohibited by United States law, including, without limitation, the development, design, manufacture or production of nuclear missiles, or chemical or biological weapons.” God, that’s some dangerous stuff.

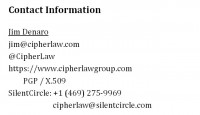

So, thank you! This (see right-hand image) is my contact information. I think we have some time for questions.

Question: What about using a corporation to limit your liability for disclosure or selling?

Answer: Corporations can be held liable in many cases.

Question: I have a question regarding full disclosure vs. responsible disclosure. When we disclose, we do it via responsible disclosure: we contact the vendor. In most cases the vendor releases a hotfix within about a week. Sometimes vendors say, “We need more time,” but sometimes they say, “We’re not going to fix it, and you can’t publish it.” Google recently announced that they plan to disclose vulnerabilities within 7 days, a 7-day turnaround. So what happens if a company like Google intends to publish a vulnerability at the end of that 7-day period, and the other company says to Google, “Don’t! If you do, we’ll sue you”?

Answer: Well, that would depend on the specific circumstances, such as whether Google has some kind of obligation not to publish. But if no law has been broken, Google could publish it without any problems.

Question: In my case, I contact a vendor and tell them I’ve got 10 vulnerabilities that I intend to publish, and they come back and say, “If you publish those, we’ll sue you.” The same thing happens when Google says, “We’re not going to give you 30 days; we’ll give you 7 days,” and the company comes back and says, “Google, we’re going to sue you if you publish.” The threat doesn’t carry the same weight when they’re threatening to sue Google as when they’re threatening to sue me, for example.

Answer: That’s the unfortunate part.