PaulDotCom’s Paul Asadoorian and John Strand present intriguing research at RSA Conference 2012 about ways to confuse, upset and geolocate cyber intruders.

Paul Asadoorian: Hello everyone and welcome to Offensive Countermeasures – Making Attackers’ Lives Miserable. My name is Paul Asadoorian, I’m from PaulDotCom, and this is John Strand, also from PaulDotCom and the SANS Institute.

So, just a little brief information about the background of this talk. John and I do a lot of penetration testing: companies hire us to test the security of their organizations. We found that over time we were amazingly successful, and it wasn’t because we’re the best penetration testers in the world necessarily, but because the defensive measures we found in place were inadequate for the measures we were using to break into those organizations.

So, one day we sat back and thought: “What can we do better? What better recommendations can we make to our customers? What tools can we arm people with to better defend their networks?” Hence this talk was born, covering three different areas of what we call offensive countermeasures. And that’s taking a little splash of offense and applying it to defense. Some people will call this hacking back, and when I say that phrase, people usually look at me with shock and horror: it’s ok, don’t panic, we’re going to go over all of the different aspects of it. So, John, if you want to maybe introduce some of the concepts on this slide, including annoyance, attribution and attack.

John Strand: Great, absolutely. So, when we were working through offensive countermeasures, as Paul mentioned, we started with the definition of insanity attributed to Albert Einstein: doing the exact same thing over and over again and expecting different results. And if you look at computer security, the vast majority of what we’ve been doing and selling: antivirus, IDS, IPS – it’s doing the same thing over and over again. So when we tried to come up with new approaches for trying to make an attacker’s life miserable, we broke it into three sections:

Annoyance. What can you do to just make an attacker incredibly annoyed with your network security architecture? What can we do for attribution to actually identify who it is that’s attacking our network? And also, what can we do for attack? Now, before people start freaking out yet again with the idea of hacking back, a lot of what we’re going to talk about are going to be things that are easy to implement and we don’t have to worry too much about legalities; but there are going to be some legalities that we are going to talk about a little bit later, surrounding offensive countermeasures.

So, with the legalities: pretend for a second that I’m a lawyer. Anything that you do in this area for offensive countermeasures, we strongly recommend that you discuss, document and plan it. One of the things that is interesting about this area is: there’s not a lot of research, there’s not a lot of case law, so if something does go wrong and the attorneys do get involved and judges get involved, if they saw that you did due diligence to actually document it, came up with a plan and have reasoning – it looks a lot better than trying to shred the documents in the process of doing it. So, trying to hide your intentions basically says that what you’re doing you know is in fact wrong, and the court will take it as such. Rule of thumb is: don’t be evil. We’re going to talk more about what being evil to an attacker would actually be like.

Paul Asadoorian: So, annoyance. Some people will say: “Why would you want to annoy an attacker?” And the best reason that I give them is that when you annoy an attacker, it tends to slow them down and it tends to make them give up some information about themselves, which lets you detect their presence inside of your network. So, putting up a little script that maybe causes their tools to do something funky – that may be an event that you can track, and it may lead to you discovering that attack just by putting some things inside of your network that are meant to be an annoyance to your attacker.

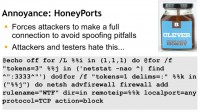

John Strand: So, here’s one of those annoyances, one little script that we came up with (see image). We did the same idea on Windows as we did for Linux as well. Once again, the idea is to try and get the attacker to slow down, get the attacker to make more moves so you can detect them.

So, this little script, which is distributed with our slides here at RSA, you can set up a little listener on port 3333. If you look at the slides, you’re going to see 3333. We have Netcat listening on that port, you can have anything listening on that port. If anybody makes a full established connection to that port, and this is why it’s so important, a full established connection: the attacker sends a SYN, you send back a SYN-ACK, they send back an ACK – that is established. As soon as that established session is created, it’s going to add a firewall rule.

As you can see, it says: “do netsh advfirewall firewall add”, we give it a name – Whiskey Tango Foxtrot; direction in, remote IP address is a variable, the IP address that’s connecting in; local port – any; protocol TCP – block.

Now, the reason why this is critical: some people would say, “You can’t do this, because all the attacker has to do is spoof their source address.” That will not work for the attacker. It’s very easy to spoof source addresses for things like SYN packets, but it’s incredibly difficult to spoof an entire established connection; not impossible, but very difficult to do.

Paul Asadoorian: One thing along with the HoneyPort: make sure the port that you choose is a port that you know should never ever be used in your environment. So monitor your environment, know which applications are running, and choose a port where you know that if you see activity on it, it’s because of your HoneyPort and because of nothing else.
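The HoneyPort idea above can be sketched in Python. This is a minimal illustration, not the script from the slides: the function names and the `block` callback are assumptions made for this example, and the `netsh` arguments simply mirror the fields read out in the talk (rule name, direction in, remote IP, any local port, TCP, block).

```python
import socket
import subprocess

RULE_NAME = "Whiskey Tango Foxtrot"  # rule name quoted in the talk


def netsh_block_command(remote_ip):
    """Build the netsh firewall command as an argument list (no shell involved)."""
    return [
        "netsh", "advfirewall", "firewall", "add", "rule",
        f"name={RULE_NAME}", "dir=in", f"remoteip={remote_ip}",
        "localport=any", "protocol=TCP", "action=block",
    ]


def run_honeyport(port=3333, block=None, max_hits=None):
    """Accept connections on `port` and call `block(ip)` for each peer.

    accept() only returns once the three-way handshake has completed,
    so a lone spoofed SYN never reaches the blocking step.
    """
    if block is None:
        # Assumed default: really add the rule (needs an elevated prompt on Windows).
        def block(ip):
            subprocess.run(netsh_block_command(ip), check=True)
    hits = 0
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as server:
        server.bind(("0.0.0.0", port))
        server.listen()
        while max_hits is None or hits < max_hits:
            conn, (remote_ip, _remote_port) = server.accept()
            conn.close()
            block(remote_ip)
            hits += 1
```

Calling `run_honeyport()` from an elevated prompt would start the listener on port 3333. Passing `block=print` instead is a safe way to watch which addresses would be blocked before letting it touch the firewall.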

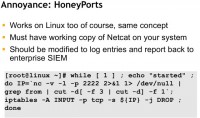

John Strand: Absolutely. And this one is one that we have for Linux (see image). It does the exact same thing we covered on the previous Windows slide, but this one’s listening on port 2222. We actually had one of our customers implement this; they had a thousand Linux servers. A pentesting company showed up and ran a port scan, and the initial SYN scan saw servers with all kinds of ports open. As soon as they did more invasive testing, like a vulnerability assessment scanner or doing full-connect scans with Nmap, the entire network went dark. Now, when you’re pentesting and the entire network just disappears, you tend to get a little bit nervous that you may have crashed the entire network. It took the pentesting company about half a day to figure out exactly what was going on.
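The transcript does not reproduce the Linux script, so the following is only a guess at the blocking step, written in Python. It assumes the block is done with an `iptables` DROP rule on the INPUT chain; the function name and the validation rules are illustrative choices, not part of the original.

```python
import ipaddress


def iptables_block_command(remote_ip):
    """Return an iptables argument list that drops all traffic from remote_ip."""
    # Validate first so malformed input never reaches the firewall tooling.
    address = ipaddress.ip_address(remote_ip)
    if address.version != 4:
        raise ValueError("iptables handles IPv4 only; IPv6 needs ip6tables")
    if address.is_loopback:
        raise ValueError("refusing to block a loopback address")
    return ["iptables", "-A", "INPUT", "-s", str(address), "-j", "DROP"]
```

The resulting list can be handed to `subprocess.run(..., check=True)` by a root-owned listener on port 2222. Given the story above about the network going dark, an allowlist for your own scanners and management hosts would be a sensible addition before deploying something like this widely.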

John Strand: Infinitely recursive directories are another area where you can mess with attackers’ lives. So, what we’re going to do is show you how you can create a directory that references itself. If an attacker is recursively searching a file system on one of your computer systems, looking for your sensitive documents, their tool is probably going to get kind of bogged down in this particular directory. We got a lot of help from another member of PaulDotCom Security Weekly, Mark Baggett. We couldn’t find any pictures of Mark Baggett, so it just turns out that he looks a lot like David Hasselhoff, so we just dropped it there (see image). He’s a very handsome man…

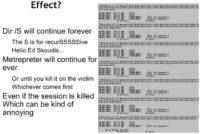

Now, how to do this? In Windows, what we do first is we create a directory (see image). And as you can see in the slides, we created a directory Goaway; we changed into that directory, we run ‘mklink /D dir1’, and it links to the Goaway directory, which is the directory that you’re in. Then we do the exact same thing a second time with dir2. We created two directories that link back to the Goaway directory. I’ll show you why that’s important on the next slide.

Whenever you go through and you start doing a recursive lookup using something like ‘dir /S’ – as Ed Skoudis likes to say, the S is for recurSSSSSive – as soon as it gets to, like, 128 characters, it says that the directory or filename is too long. So, if you have two directories though, as soon as it hits that 128 character limit, it alternates to the second directory, hits the 128 character limit, goes back to the first directory, and it alternates back and forth (see image).

So, if somebody was attacking your network, let’s say with Metasploit, and they were using the Meterpreter to try to identify directories with sensitive documents, Meterpreter would go through and recursively look through the file system; it would hit this particular directory and get stuck; it would just continue to go forever and ever. Your CPU would jump to, like, 60-70%, which would serve two purposes: first, it shuts down the bad guy’s backdoor – believe it or not, even if the bad guy kills the session, the recursive search continues to run; and the second added bonus is that it’s very easy for you to identify the malware.
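The same self-referencing layout can be sketched in Python with `os.symlink`, which plays the role of `mklink /D` here. The function name and the default directory name are chosen for this example; on Windows, creating symbolic links typically requires elevated rights or Developer Mode.

```python
import os


def build_loop_directory(base_dir, name="Goaway"):
    """Create `name` under base_dir with dir1 and dir2 linking back to it."""
    trap = os.path.abspath(os.path.join(base_dir, name))
    os.makedirs(trap, exist_ok=True)
    for link_name in ("dir1", "dir2"):
        link_path = os.path.join(trap, link_name)
        if not os.path.lexists(link_path):
            # target_is_directory only matters on Windows (like mklink /D).
            os.symlink(trap, link_path, target_is_directory=True)
    return trap
```

After `build_loop_directory(".")`, paths such as `Goaway/dir1/dir2/dir1` all resolve back to `Goaway`. Note that only tools that follow links get caught: Python's own `os.walk`, for instance, does not follow symbolic links unless `followlinks=True` is passed.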

Paul Asadoorian: Now we’ll move on with annoyance. In this part it’s about setting traps. This was a really great concept that I was happy to apply to computer security, because I always thought that people thought of honeypots as these vulnerable systems that have all these vulnerabilities on purpose, that you’re putting out on the network and letting people hack into them.

And we kind of thought of honeypots as something more like a trap, where it’s almost expected behavior that’s not necessarily a vulnerability, but will draw the attacker in to be able to do stuff, like, for example, when they’re spidering your website.

This is very close to recursively searching a directory on a file system, except now, when the attacker goes to your website and starts looking for different directories, in which maybe more web applications exist, or maybe looking for places where files are going to list out inside of your website, what you do is you trap them with something called SpiderTrap and WebLabyrinth.

SpiderTrap was the original Python script that someone who worked for us wrote, that basically just listens as a web server; when you make a connection to it, it presents a web page; and the web page links to a bunch of different directories. When you click one of those links, whether with your automated tool or with your web browser, it takes you to yet another page, which then displays yet another set of random links, and clicking one of those random links then displays another page, so you can see the tools will get stuck in this trap.

Ben Jackson converted this into PHP and added all kinds of neat functionality, so you can tell the Google bots not to get stuck inside of your trap, so Google can still index your site.
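The SpiderTrap behavior described above can be approximated with Python's standard `http.server` module. This is a sketch written for this transcript, not the original SpiderTrap code; the helper names, link length and port are assumptions.

```python
import random
import string
from http.server import BaseHTTPRequestHandler, HTTPServer


def random_links(count=10, rng=random):
    """Return `count` random relative directory names, each ending in '/'."""
    return [
        "".join(rng.choice(string.ascii_lowercase) for _ in range(8)) + "/"
        for _ in range(count)
    ]


def render_page(links):
    """Render a bare HTML page containing one anchor per link."""
    anchors = "\n".join(f'<a href="{link}">{link}</a><br>' for link in links)
    return f"<html><body>\n{anchors}\n</body></html>"


class TrapHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Every path, whatever it is, gets a fresh page of random links.
        body = render_page(random_links()).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8080):
    """Run the trap until interrupted."""
    HTTPServer(("0.0.0.0", port), TrapHandler).serve_forever()
```

Because the links are relative, each click leads to a URL that has never been seen before, so a spider that deduplicates visited URLs still never runs out of new pages. A production version would also want the search-engine exclusion mentioned above.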

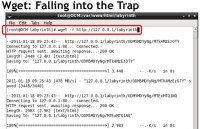

When you run something like Wget against it, it will actually fall into the trap (see image). When you recursively go to a site and start following all those links, doing that dynamic search, what we call spidering, crawling tools will fall into the trap. And we’ve actually caught some attackers using highly specialized tools looking for specific vulnerabilities; we’ve caught them inside of the traps, hopefully slowing them down so that they’re not finding anything on our website and slowing them down from attacking other people’s websites.

w3af is another tool which gets fooled by this trap, and it’s kind of funny: the progress bar in the w3af goes about ¾ of the way through, and it says: “Yep, I’m doing good, I’m spidering your website for you.” Then it kind of backs up, then it kind of goes forward, and it just sits there forever trying to recursively search your web directories.

Paul Asadoorian: Now along to attribution. So, if we can annoy attackers and draw them into certain places inside of our website or inside our network, let’s learn some information about them. What kind of tools are they using? What kind of web browsers are they using? Where are they specifically?

A lot of these techniques have a lot of repercussions for a lot of organizations, such as law enforcement. I mean, knowing where an attacker is at is huge these days, especially with technologies such as TOR, open proxies on the Internet – there’s lots of ways for an attacker to hide, so why not find out? Maybe the same attacker, you can correlate from different areas. You can say: “Hey, there were these 16 different IP addresses attacking us, but guess what – we did the attribution that we’re going to talk about in this section, and it is in fact the same attacker.”

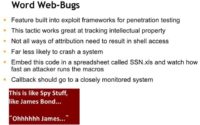

John Strand: The first one we’re going to talk about is Word web-bugs (see image). This is something that I originally found; it was actually implemented by good friends of ours. They embedded HTML inside a Word doc, and then the Word doc will beacon back home; I’ll show that on the next slide.

But you don’t need Core Impact or any expensive tool to do that, you can do this on your own. Microsoft Word can read files that contain HTML. If you put in HTML with an iframe or a reference to a cascading style sheet, then, as soon as Word starts, it’s going to try and load that resource. And when it does, it’s going to create a quick beacon back, and you can capture some information about where your documents are.

We have customers that have very, very sensitive files and they want to make sure they can track them wherever they are (see image). So, we put in a little bit of HTML; they create their documents; they use them however they normally would, but as soon as someone opens one, it’s going to create a beacon. Then we can take the IP addresses that are connecting back, and we can geolocate where that document is. If your company is in Ohio, and all of a sudden your document is being opened in Kuala Lumpur, you might have something to research. This is very effective, and, by the way, this is completely independent of whether or not you have VBScript.
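As a rough illustration of the web-bug idea, the Python sketch below builds an HTML document whose stylesheet reference points at a server you control, and recovers the document ID from the resulting request. The beacon URL layout, the `doc` query parameter and both function names are hypothetical, and whether a given Word version actually fetches the resource depends on its version and settings.

```python
import html
from urllib.parse import parse_qs, quote, urlparse


def build_bugged_html(doc_id, beacon_base, body_text):
    """Return HTML whose stylesheet link points at the beacon server."""
    beacon_url = f"{beacon_base}/style.css?doc={quote(doc_id)}"
    return (
        "<html><head>\n"
        f'<link rel="stylesheet" type="text/css" href="{beacon_url}">\n'
        "</head><body>\n"
        f"<p>{html.escape(body_text)}</p>\n"
        "</body></html>\n"
    )


def doc_id_from_request(request_path):
    """Extract the document ID from a beacon request path, or None."""
    values = parse_qs(urlparse(request_path).query).get("doc")
    return values[0] if values else None
```

On the server side, logging the client address next to the value returned by `doc_id_from_request` for every request to `/style.css` would give the list of IP addresses to geolocate, one entry per time a tagged document was opened.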

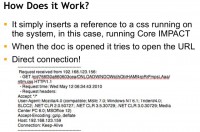

The next one is the Decloak project. This is something that was started by folks at Metasploit, and we actually have a couple of additional modules that we’ve added to it. But the idea is if an attacker is attacking you and they’re attacking you through a TOR network and they’re trying to obfuscate where their real IP address is, there are ways that we can get them to connect to us with other applications.

If you look at the slide (see image), you can see the TOR when it logs the information; it’ll say that we have a connection from Java, we have a connection from HTTP, we have a connection from UDP. How this works is when you go to a website, it will spawn off Microsoft Word, it will spawn off iTunes, it will spawn off a Java app, it will spawn off a Flash application. And the reason why that works is because many of these applications, when you’re using TOR with your browser, may not be going through the TOR connection. As soon as they’re invoked, they’re not going to go through the TCP connect function, and they’re going to make a direct connection back to you. So that’s very effective when we’re talking about how to attribute who is attacking you. Speaking of attack, Paul…
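The correlation step behind this kind of decloaking can be sketched in Python as follows. This is not the Decloak project's code; it assumes each visitor is handed a unique token that every spawned application sends back, and that the server records `(token, channel, source_ip)` tuples. All names here are hypothetical.

```python
from collections import defaultdict


def find_leaks(observations):
    """Group (token, channel, source_ip) records and report mismatches.

    Returns {token: {channel: ip}} for every token seen from more than
    one source address, which suggests some channel bypassed the proxy.
    """
    by_token = defaultdict(dict)
    for token, channel, source_ip in observations:
        by_token[token][channel] = source_ip
    return {
        token: channels
        for token, channels in by_token.items()
        if len(set(channels.values())) > 1
    }
```

If the HTTP request for a token arrives from a Tor exit node while the Java or UDP callback for the same token arrives from a different address, that second address is a candidate for the visitor's real location.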

Paul Asadoorian: So, the attack section is the one that I think gets people most nervous – when we say we’re going to actually attack the attackers. And we don’t mean to attack them in that we’re going to log into their systems and delete their hard drives – not all the time, anyway. But we’re going to have attackers come to places on our website or inside of our network; then they’re going to visit specific places which we are drawing attackers to; and the attackers now are going to launch code that we provide them.

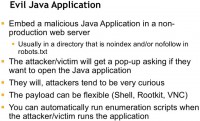

And that’s what we really mean by attack – they are running code of our choice. A lot of this presents itself to management applications, so we set up fake management applications that say: “Hey, attackers, come manage this switch that’s wide open to the network or the intranet that’s on my network, and, by the way, you need a Java payload in order to manage this switch.” That Java payload, essentially, could give us things like shell, it could delete their hard drives, or it could provide us with more attribution (see image).

John Strand: Probably the most well-known Java application – for penetration testers, anyway – is the signed Java applet attack in Metasploit, and also implemented in the Social Engineering Toolkit by Dave Kennedy.

You can actually set that up at a part of your website, mentioning in robots.txt ‘disallow’ or ‘nofollow’ to the admin directory, and as soon as they get there, exactly as Paul said, it says: “You need to install this Java app.” Now, if they believe – attackers, that is – that they need to install this Java app to manage your firewall, you’d better believe that they’re going to do it. We do recommend having warning banners in place, clearly telling the bad guys that by coming to this page they’re subject to the reasonable terms of us checking their computer out. It should say: don’t be evil.
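The robots.txt bait and warning banner can be sketched in Python without any payload at all, which is enough to turn a visit to the bait directory into an alert. The path, the banner wording and the function names below are placeholders; the real banner text should come from your legal department, as the talk stresses.

```python
BAIT_PATH = "/admin/"  # hypothetical bait directory; nothing real lives here

WARNING_BANNER = (
    "Authorized administrators only. By proceeding you consent to "
    "security checks of the connecting system."
)  # placeholder wording, not legal advice


def robots_txt(bait_path=BAIT_PATH):
    """Advertise the bait path to anyone who reads robots.txt."""
    return f"User-agent: *\nDisallow: {bait_path}\n"


def is_bait_hit(request_path, bait_path=BAIT_PATH):
    """Return True when a request targets the bait directory."""
    path = request_path.split("?", 1)[0]
    return path == bait_path.rstrip("/") or path.startswith(bait_path)
```

Well-behaved crawlers honor the Disallow line and legitimate users have no link to the path, so a request for which `is_bait_hit` returns True is worth logging and investigating on its own.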

So, the evil Java application: as we said, we can put a reference in robots.txt. The bad guy goes right to that directory, hopefully a warning banner pops up that’s been approved by your legal department, and as soon as they run the Java code, then it’s code of your choosing. At that point you have the capability of getting shell: you can do a rootkit; VNC level access, if you have, of course, authorization from various law enforcement agencies; or it could be something as simple as what are the users on the system. What is the IP address of the system? It does traceroute: what is the hostname of the computer system? What are the wireless access points that are nearby? There’s a custom Java app that we developed that allows us to actually identify geolocation, latitude and longitude simply by triggering the applet.

Now, here’s an example, once again, from the Social Engineering Toolkit (see left-hand image). You still get a pop-up in this situation, as you would get in penetration testing – the idea is about using some of the techniques that pentesters use, but using them in such a fashion that we can defend and attribute and retrieve some additional information about the attackers. Everyone clicks Run, and for about $160 you can make the little pop-up go away by having it digitally signed. It’s not pretty, but it works.

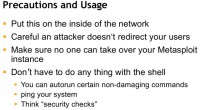

So, precautions and usage: please, please, please make sure that a lot of the stuff you set up is something you set up on the inside of your network. You want to make sure that the attacker doesn’t redirect your users to your own traps. Make sure no one can ever take over your Metasploit server; and also, you don’t have to do everything with your servers: you can autorun certain non-damaging commands; you can ping your system; you can do security checks. And the important thing is that if it ever goes to court, you can say: “We never intended to get fully maintained access on their system; we just validated the security configurations of their computer; and, by the way, they agreed to that in the warning banner.”

Paul Asadoorian: So, just as a conclusion: you can watch our shows where we talk about these techniques and more; you can listen to our podcast which is in iTunes by searching for PaulDotCom, and you can watch our live production or recorded videos by going to our website pauldotcom.com/live to watch us live, or on YouTube to watch our prerecorded videos.

John Strand: We are pretty much everywhere. Thank you very much for your time!