Participating in the USENIX Security Symposium, software engineer and security researcher Marti Motoyama presents an in-depth study of automated and human-based CAPTCHA-solving services on the market.

Good afternoon, ladies and gentlemen. My name is Marti Motoyama. The title of my talk is Understanding CAPTCHA-Solving Services in an Economic Context. This is work I did with my co-authors and advisors at the University of California, San Diego.

A number of Internet services today are offered for free with the hope that the advertising revenue generated by the sites will produce a profit. But the key assumption behind the business models employed by these sites is that human eyes are viewing those advertisements.

Unfortunately, the free services offered by these sites can be monetized using unscrupulous means (see left-hand image). For example, web-based email accounts can be used for spamming, and social engineering attacks can be mounted via identity hijacking. Attackers have even used cloud services for botnet C&C (command and control).

In order to make these attacks profitable, the attackers must abuse these services at scale. The Spamalytics study in 2008 observed that over 100,000 spam emails must be sent before achieving even $1 in revenue. And to achieve this scale the attackers will generally rely on automation.

As a response to automation, web service providers will typically employ CAPTCHAs. The goal of our work is to evaluate CAPTCHAs as a security mechanism by looking at the CAPTCHA-solving ecosystem. Our approach is to explore a range of data sources. First, we briefly look at software CAPTCHA solvers and evaluate their role in the solving ecosystem.

Next, we characterized third-party human solving services from the perspectives of both a customer and a solver employee. In our role as a customer, we purchased CAPTCHA solves from the human solver services, and as a solver employee we solved CAPTCHAs for several services included in our study. Most of our work is concentrated here, as this solving methodology seems to be taking hold as the preferred means of bypassing CAPTCHAs.

Lastly, we also conducted an interview with a human-based solving service site operator, who I’ll refer to as Mr. “E” in the remainder of this talk. Mr. “E” was not a core component of this study, but we used a lot of his feedback to confirm our findings.

So, first let me introduce CAPTCHAs. If you have any sort of web presence, you’ve probably solved a CAPTCHA at some point in your life, and shown here are just a few examples of them (see right-hand image).

CAPTCHAs have several properties that make them useful to web service providers: they can easily be solved by humans, meaning that you’re not going to scare away your legitimate users; they can easily be generated and evaluated; and lastly, they cannot be easily solved by a program, which would, presumably, prevent automated attacks against these websites.

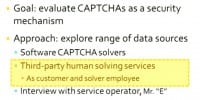

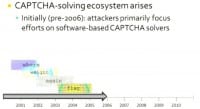

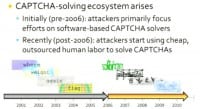

CAPTCHAs were conceived in roughly 2000, but attackers are not simply going to go away just because you’ve erected this barrier in the form of a CAPTCHA. As an initial response to CAPTCHAs, people wishing to abuse these services would typically turn to software-based CAPTCHA solvers. Because the texts on the CAPTCHAs were not greatly obfuscated, the attackers could use various vision techniques to identify the text present on the CAPTCHAs (see left-hand image). This approach seemed to be among the few used up until around 2006, roughly.

We looked at several software solvers during the course of our study – more details can be found in the paper. I’ll briefly talk about our experiences with XRumer. XRumer is a market-leading forum spammer. In order to post at forums, guest books and bulletin boards, the tool often needs to create an account, thereby necessitating a CAPTCHA solve. Thus the authors of this tool have built in significant CAPTCHA-solving abilities. Shown here (see right-hand image) are lots of examples of CAPTCHAs that the tool claims it can break.

We purchased a legitimate copy of this tool for $540 along with several popular forum software products. We tested different versions of the forum software against XRumer. We discovered that in fact XRumer was capable of solving the default CAPTCHAs in contemporaneous versions of the most widely used forum software products.

In response, however, the new forum software versions have modified their default CAPTCHAs to not be solvable by XRumer. With version 5.0.9, which was released in August of 2009, XRumer moved to support third-party human solving services, and in particular, Antigate and Captchabot, both of which we study in our particular paper.

Why did the authors of XRumer seemingly throw up their hands? To start with, I’d like to begin with an analogy to traditional security. In traditional security the bad guys obfuscate, the good guys recognize. This dichotomy exists between virus writers and AV vendors, spammers and email providers. What can be seen is that recognition is inherently the harder task.

Contrast this with CAPTCHAs, where the roles are reversed: the good guys have the easier task of producing harder CAPTCHAs, while the bad guys must use advanced vision techniques to decipher text on a new CAPTCHA (see right-hand image). And today, as far as we know, there doesn’t seem to be a general CAPTCHA solver on the market.

Software CAPTCHA Solvers

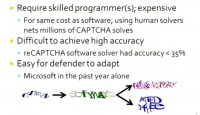

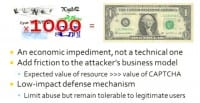

Let’s delve down further into the challenges facing software solvers. First of all, they require skilled programming labor, and hence can be expensive to develop and/or purchase. Specialized solvers cost on the order of hundreds or thousands of dollars, and for a thousand dollars one can purchase a million CAPTCHA solves done by humans.

It’s difficult for software to achieve very high accuracy. We looked at a reCAPTCHA solver in our paper, and we observed that it had accuracy below 35%. And if you keep failing at a CAPTCHA, that provides evidence to the web service provider that you may be using an automated solver. Solvers also typically have very short life spans, because the CAPTCHAs they target keep changing. Microsoft, for example, has aggressively changed their CAPTCHA in the last year alone. And shown here are various examples of the CAPTCHAs they’ve changed just in the past year.

In talking with Mr. “E”, he also expressed that he didn’t feel it was worth it to purchase or develop software solvers: the solvers don’t last, and hence are just not economically worthwhile to pursue. In his own words, it’s just a big waste of time to invest in this particular approach. And this is especially true as CAPTCHAs become increasingly more complex. Thus, as of roughly late 2006, attackers began using aggregated cheap human labor to solve CAPTCHAs (see left-hand image).

For the sake of illustrating how attackers typically use human solvers, let’s suppose an attack was being mounted against Twitter. After the individual or the bot program extracts the CAPTCHA, they send it to one of these human-based solving services. In this example we’ll assume that the attacker is using Decaptcher, a website that aggregates customer requests for CAPTCHA solves (see right-hand image).

Decaptcher has a corresponding worker backend called PixProfit, a website which aggregates workers from low-cost labor markets to actually perform the CAPTCHA solves. PixProfit is just going to display that CAPTCHA to some worker in the world; that worker is going to type in the text, and then that solution is going to make its way back to the submitter. Using that solution, the bot can then register an account (left-hand image).

Third-Party Human-Based Solvers

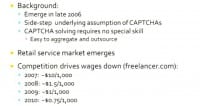

Now I’m going to migrate into the meat of the talk, the human-based CAPTCHA solvers. People first began selling human CAPTCHA solving services in roughly 2006. In essence, these services sidestep the underlying assumption of a CAPTCHA that a program alone is attempting to abuse the service and that the individual who’s typing in the alphanumeric characters is actually the person who’s trying to access the resource.

The fact that CAPTCHAs need to be easily solvable makes it incredibly easy to aggregate and outsource this task. Many enterprising individuals have taken note of this: businesses selling CAPTCHA-solving services are springing up all over the web, driving prices down and correspondingly the wages paid to solvers. Shown here (see left-hand image) are example wages paid to workers who solve CAPTCHAs, and that’s in units of CAPTCHA solves.

So, the goal of our work is to develop a clear picture of what human-based CAPTCHA solvers are capable of doing. Really, we wanted to know the answer to the question: just how good are these human-based CAPTCHA solvers? We looked at several metrics: price, availability, response time, accuracy, and capacity. We won’t look at CAPTCHA difficulty in this talk; for an in-depth analysis of CAPTCHA difficulty, I advise you guys to please see the Stanford paper that was presented at Oakland in 2010.

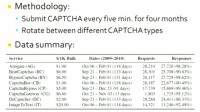

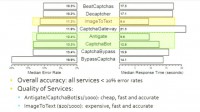

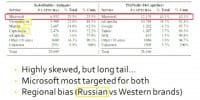

If the services we look at provide all of the above, then this, really, suggests that CAPTCHAs cannot necessarily prevent wide-scale abuse. In our study we looked at 8 services (see left-hand image) that spanned a number of different price points. The cheapest services were Russian-based and charged $1 for every 1000 CAPTCHA solves. The most expensive was ImageToText, which had a bulk price of $20 per 1000 CAPTCHAs solved. And there’s a variety of reasons why these prices all differ, some of which I’ll get to later in this talk.

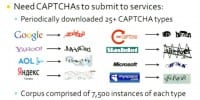

To characterize these services, however, we needed a corpus of CAPTCHAs to submit. What we did is we periodically downloaded over 25 different types of CAPTCHAs from sites such as Google, reCAPTCHA, Microsoft, and Yahoo. In the end, we had about 7500 images per site in our corpus (see right-hand image).

We just went ahead and signed up as a customer on each of those 8 human solver services, and then we submitted a CAPTCHA every 5 minutes over the course of 4 months. We rotated among the various CAPTCHA types between each CAPTCHA submission, meaning that at minute 0 we submitted a Microsoft CAPTCHA, and at minute 5 we submitted a Google CAPTCHA.
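The rotation described above can be pictured with a small scheduling sketch. This is only an illustration, not the tooling used in the study: the function name `submission_schedule`, the start date, and the list of CAPTCHA types beyond Microsoft and Google are assumptions made for the example.

```python
from datetime import datetime, timedelta
from itertools import cycle, islice
from typing import Iterable, Iterator, Tuple


def submission_schedule(
    captcha_types: Iterable[str],
    start: datetime,
    interval: timedelta = timedelta(minutes=5),
) -> Iterator[Tuple[datetime, str]]:
    """Yield (submission_time, captcha_type) pairs, rotating through the types."""
    for i, captcha_type in enumerate(cycle(captcha_types)):
        yield start + i * interval, captcha_type


# Hypothetical start date and type list, for illustration only.
start = datetime(2010, 1, 1)
types = ["Microsoft", "Google", "Yahoo"]
for when, captcha_type in islice(submission_schedule(types, start), 4):
    print(when.strftime("%H:%M"), captcha_type)
```

With three types, the fourth submission (minute 15) wraps around to the first type again, so every type is sampled at all times of day over a long enough run.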

Service Availability

The services in general had a fairly high availability, meaning that the service provided an answer to a CAPTCHA that we submitted. And in particular, BypassCaptcha and Antigate had close to 100% availability. During this time period two of the services we studied actually went offline. We suspect that this was due to increasing competition between services. So, the end takeaway from this slide (see image above) is that services have a fairly high availability: over 80% of the time they were able to process our requests across all services. They’re also very competitively priced.

Response Time

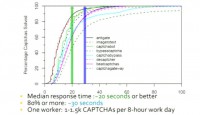

The next metric we looked at was response time: we wanted to learn how long the services take to return responses, and then using that information we can estimate how many CAPTCHAs one worker can solve on a daily basis. Shown here is the CDF of the response time from our study (see left-hand image). We see that the median response time across the 8 services is 20 seconds or better. For most of these services over 80% of the responses are returned within 30 seconds. Using these numbers, we can estimate that one worker can solve roughly between 1000 and 1500 CAPTCHAs in an 8-hour workday.
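The workday estimate is simple arithmetic, sketched here under the assumption that a worker solves CAPTCHAs back to back and that the observed response time (20 to 30 seconds) approximates the time spent per CAPTCHA. The function name is made up for the example.

```python
SECONDS_PER_HOUR = 3600


def solves_per_workday(seconds_per_solve: float, hours: float = 8.0) -> int:
    """CAPTCHAs one worker completes if each takes `seconds_per_solve` seconds."""
    return int(hours * SECONDS_PER_HOUR / seconds_per_solve)


for latency in (20, 30):
    print(f"{latency} s per solve -> {solves_per_workday(latency)} solves per day")
# 20 s per solve -> 1440 solves per day
# 30 s per solve -> 960 solves per day
```

Both figures land close to the 1000 to 1500 range quoted in the talk.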

Accuracy and Quality of CAPTCHA Solves

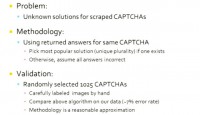

But the answers to the CAPTCHAs are actually pretty useless unless they’re correct. However, we in fact do not know the correct answers for the CAPTCHAs that we submitted. Thus, to assess correctness, using the answers we received for the same CAPTCHA, we assumed that the correct answer is the most popular solution, or unique plurality, if one exists. Otherwise we simply assume that all the answers we got were wrong.

So, for example, if we got 3 answers back for one CAPTCHA and 2 of those agreed, then we assume that the agreed-upon solution is the correct answer. However, if we got 3 solutions back and they’re all different, we just assume all the answers are wrong. We validated our methodology by randomly selecting about 1025 CAPTCHAs. We labeled them by hand and compared our answers to the ones we got using our methodology. We had a roughly 7% error rate, and we suggest that our methodology is a reasonable approximation of the correct answer (see image).
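The unique-plurality rule can be written down in a few lines. This is a minimal sketch of the idea as described in the talk, not the study’s actual code; the function names are hypothetical, and answers are compared as exact strings (any normalization of case or whitespace would be an extra assumption).

```python
from collections import Counter
from typing import Optional, Sequence


def plurality_answer(answers: Sequence[str]) -> Optional[str]:
    """Return the unique most common answer, or None if first place is tied."""
    if not answers:
        return None
    ranked = Counter(answers).most_common(2)
    if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
        return None  # no unique plurality: treat every answer as wrong
    return ranked[0][0]


def error_rate(answers: Sequence[str]) -> float:
    """Fraction of answers that disagree with the plurality answer."""
    correct = plurality_answer(answers)
    if correct is None:
        return 1.0
    return sum(a != correct for a in answers) / len(answers)


print(plurality_answer(["x7kq", "x7kq", "x7kg"]))  # x7kq
print(plurality_answer(["x7kq", "x7kg", "x1kq"]))  # None
```

Note that a single answer counts trivially as a unique plurality in this sketch; how the study handled CAPTCHAs with only one response is not stated in the talk.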

Using this methodology, we can then begin to break down how accurate each of these services is. Also, we can see whether price affects the quality of the service, that is, if we pay more money, are we getting better responses?

Shown here (see left-hand image) are the median error rate and response times among the CAPTCHA types for each service. We can see that the services all had error rates below 20%. BypassCaptcha actually had the worst error rate at close to 20%, while the defunct service CaptchaGateway had the worst response time at about 21 seconds. Antigate and CaptchaBot were both cheap, fast and accurate, while ImageToText was expensive, fast and accurate, which really seems to suggest that the quality of a service is not really dependent on its cost. BeatCaptchas and Decaptcher have very suspiciously similar characteristics, and we have further evidence to suggest that BeatCaptchas, which costs roughly three times as much as Decaptcher, just resells Decaptcher solves.

Service Capacity

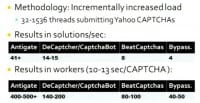

Next, we measured capacity by subjecting each of the services to a varying load of Yahoo CAPTCHAs. Each thread we started would submit a CAPTCHA, wait for a response, and then submit another CAPTCHA immediately after. We assumed that a service is maxed out capacity-wise when we started to receive a large volume of error messages, which is what services typically do when they’re overloaded.

Using this methodology, we were unable to max out Antigate, which processed our requests at a rate of 41 solutions per second. If we extrapolate by assuming that CAPTCHAs take anywhere between 10 and 13 seconds to solve, then Antigate has somewhere between 400 and 500 workers. And the numbers shown here represent the capacity available to us at off-peak hours (see right-hand image).
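The worker estimate follows from multiplying throughput by the time each solve occupies a worker (Little’s law). The sketch below assumes every worker is kept continuously busy, which is a simplification; the function name is made up for the example.

```python
def estimated_workers(solves_per_second: float, seconds_per_solve: float) -> float:
    """Concurrent workers needed to sustain the given throughput.

    Little's law: items in progress = arrival rate * time per item.
    """
    return solves_per_second * seconds_per_solve


for solve_time in (10, 13):
    workers = estimated_workers(41, solve_time)
    print(f"{solve_time} s per solve -> about {workers:.0f} workers")
# 10 s per solve -> about 410 workers
# 13 s per solve -> about 533 workers
```

These values are in line with the talk’s rounded figure of 400 to 500 workers.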

As a conservative estimate, all the services combined can solve over a million CAPTCHAs per day. And what’s scary as well is that this capacity can grow at the drop of a hat: Mr. “E” says if he gets a large volume of CAPTCHAs, he’ll just call more workers to jump online and start solving them for him.

So, what we have done so far is characterize the services, showing that they’re capable of solving a large volume of CAPTCHAs accurately and within a reasonable amount of time. Now we’ll start to take a look at the people involved in the solving.

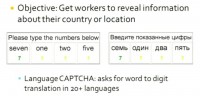

By looking at the labor demographics, we can better understand the cogs that operate within these CAPTCHA-solving machines; perhaps new CAPTCHAs can be developed that capitalize on this labor demographic knowledge. We developed two CAPTCHAs, I only have time to show you one. The CAPTCHA asked the user to translate the words zero through nine into their corresponding numeric values (see right-hand image).

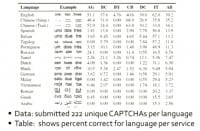

So, for example, we asked the user to convert the spelled-out word “seven” to the number 7, and we did this for over 20 languages. We submitted 222 unique CAPTCHAs per language to each of the six services still operating when we conducted this experiment. So, AG corresponds to Antigate, BC – BeatCaptchas, BY – BypassCaptcha, CB – CaptchaBot, DC – Decaptcher, IT – ImageToText.

The table shown on the screen shows the percentage of correct responses we received for each language per service (see left-hand image). English and Chinese are well represented across most services; otherwise the services exhibit different affinities for different languages. For example, Antigate and CaptchaBot have a strong Russian presence, while BeatCaptchas has strong Tamil- and Portuguese-speaking populations. And this is very logical, as the regions where they speak these languages correspond to low-cost labor markets.

We see something very odd with ImageToText: they really have a remarkable ability to translate this CAPTCHA where all the other services really seem to fail. Not only that, ImageToText was even able to solve CAPTCHAs from the synthetic language that we incorporated into this experiment, Klingon. The main takeaway from this slide is that the low-cost labor markets – China, India, Russia, Eastern Europe – are well represented.

The last series of CAPTCHAs that we submitted to the services were intended to assess how fast workers could adapt to new CAPTCHA types. We exposed workers to a new type of CAPTCHA that is based on the Asirra CAPTCHA that was proposed in 2007. Asirra is a CAPTCHA based on identifying cats and dogs. Shown here is an example of the Asirra CAPTCHA (see right-hand image).

Using the corpus of labeled cats and dogs provided on the Asirra website, we fashioned a CAPTCHA that we could submit to the services. Since their APIs only generally allow uploading one image at a time, what we did was we took that labeled corpus and we generated this CAPTCHA (see image on the left). We put the instructions in the 4 most prevalent languages – this is one image, so we just montaged a bunch of pictures together and then submitted to each of these services.

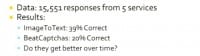

So, our results: we got back about 15,000 responses from five of these services. ImageToText already had proven adaptable to new CAPTCHAs and solved this CAPTCHA with appreciable accuracy, close to 40%. BeatCaptchas also seemed to do exceedingly well (see right-hand image).

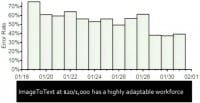

To assess how well ImageToText workers learn, we plotted their error rate over the two weeks during which we submitted those images. Shown here (see right-hand image) is the decrease in error rate for the ImageToText workers: as you can see, the error rate drops steadily throughout the week, eventually reaching 40%. Thus, ImageToText at $20/1000 has a highly adaptable workforce.

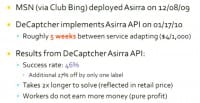

At approximately the same time that we deployed our custom version of the Asirra CAPTCHA, Decaptcher began offering an API specifically for Asirra. We were curious why, since we didn’t think we had influenced it, and we discovered that Microsoft had actually deployed Asirra on Club Bing on December 8, 2009. This is a screenshot from when they CAPTCHA’d you on that site (image on the left). Club Bing is a site where you go and play games, earn tickets, and then redeem those tickets for things like Xbox 360s, Zunes, etc. So, you can see that there’s a good reason why you might want to abuse this service: you might want to get those products and resell them.

Decaptcher implemented the API on January 17, 2010, meaning that roughly 5 weeks elapsed before the service responded to this new CAPTCHA type. Using the API, we observed a success rate of 46% among the responses that we got back. An additional 27% were off by one label. We observed that it takes roughly twice as long to solve these CAPTCHAs, which has factored into the retail price: I’m sorry I didn’t mention this, but they charge you $4 for every 1000 Asirra CAPTCHA solves. Decaptcher generally charges you $2 per 1000 CAPTCHA solves. And their workers do not earn more money.
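The distinction between a fully correct response and one that is “off by one label” can be made concrete with a small scoring sketch. The encoding is an assumption made purely for illustration: each response is treated as a string with one character per image, `c` for cat and `d` for dog. The talk does not describe the actual response format, and the function names are hypothetical.

```python
from typing import Optional


def label_mismatches(response: str, truth: str) -> Optional[int]:
    """Count positions where the submitted labels differ from the ground truth.

    Returns None when the response has the wrong length (treated as malformed).
    """
    if len(response) != len(truth):
        return None
    return sum(r != t for r, t in zip(response, truth))


def classify(response: str, truth: str) -> str:
    """Bucket a response as 'correct', 'off_by_one', or 'wrong'."""
    mismatches = label_mismatches(response, truth)
    if mismatches == 0:
        return "correct"
    if mismatches == 1:
        return "off_by_one"
    return "wrong"  # two or more bad labels, or a malformed response


truth = "cdcdccddcdcd"  # hypothetical 12-image challenge
print(classify("cdcdccddcdcd", truth))  # correct
print(classify("cdcdccddcdcc", truth))  # off_by_one
```

Counting near misses separately is useful because a CAPTCHA that tolerates a single wrong label would accept both buckets.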

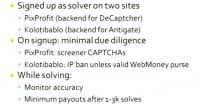

Now we’re going to take a look at the human solver backends to get a sense of the worker experiences. We signed up as a solver on two sites, knowing that they are the backends for several services included in our study. The first is PixProfit, which is the backend for Decaptcher. This is an example of what the interface looks like for those workers (see upper part of the right-hand image). The second is Kolotibablo, which is the backend for Antigate. Here is an example of what that interface looks like (bottom part of the image).

What we did was we set up scripts to scrape the presented images and then pipe them back into the corresponding service. So, for example, we pulled out images from Kolotibablo and then used Antigate to solve them. There is minimal due diligence to sign up for these services. We realized later that PixProfit actually had about 30 or so screener CAPTCHAs that they first present you with. Kolotibablo threatens you with an IP ban unless you present them with a valid WebMoney purse. WebMoney is an e-currency, and that’s how they pay their workers. And they do actually carry out this IP ban.

As you solve CAPTCHAs, these services monitor your accuracy. I didn’t mention this, but these APIs also allow the customers to report when a worker has solved a CAPTCHA incorrectly. Additionally, you are not allowed to cash out until you solve anywhere between 1000 and 3000 CAPTCHAs.

Here’s a breakdown on the targeted sites (see right-hand image). We can see that the CAPTCHA distribution is highly skewed. The top 5 CAPTCHAs on PixProfit comprise 90% of the data, while the top 5 for Kolotibablo comprise ¾ of the data. Between the two, however, we see that Microsoft is highly targeted, which is probably the reason why they’re rotating their CAPTCHA so often. However, the remainder of the top 5 really differs by region. Kolotibablo, which is Russian, has a fair number of Russian sites in the top 5. PixProfit, on the other hand, has more of a Western flavor to it: Google, Yahoo, AOL.

So, we’ve done all this analysis at this point, and it’s time to step back and really ask ourselves: do CAPTCHAs work? In terms of differentiating between computers and humans – yes, our exploration of software solvers really seems to confirm this. However, do they prevent large-scale abuse? No, there’re a lot of tools out there that incorporate human-based CAPTCHA solver services (see left-hand image).

We have shown that these services have a tremendous amount of capacity, are accurate and have good response times. Here’s a quote by Mr. “E”: he says that he solves on the order of about 100,000 CAPTCHAs daily. This means there’s over 100,000 daily instances of abuse taking place on a site: account sign-ups, spam emails sent, or forum postings made.

However, we don’t want you to draw the conclusion that CAPTCHAs are a failure – that’s a mistake (see image). Our work is intended to suggest that CAPTCHAs are an economic impediment and not necessarily a technical one. What they do is they add friction to an attacker’s business model; they’re valuable in weeding out those attackers whose business models are just not cost effective in the face of needing to bypass CAPTCHAs. After all, an attacker is going to have to expend some capital to get past the barrier of a CAPTCHA, and because of this the value of the resource that’s being abused has to be much greater than the cost of solving the CAPTCHA.
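The economic argument can be illustrated with a back-of-the-envelope break-even check. This is a sketch, not a model from the paper: the function names and the revenue figures are hypothetical, while the $1 and $20 per 1000 price points are the ones quoted earlier in the talk. The `accuracy` parameter reflects that wrong answers are paid for but wasted, which is an assumption about how services bill.

```python
def captcha_cost_per_action(price_per_1000: float, accuracy: float = 1.0) -> float:
    """Expected spend per successfully solved CAPTCHA, in dollars."""
    return price_per_1000 / 1000 / accuracy


def is_profitable(revenue_per_action: float, price_per_1000: float,
                  accuracy: float = 1.0) -> bool:
    """True if one CAPTCHA-gated action earns more than the solve costs."""
    return revenue_per_action > captcha_cost_per_action(price_per_1000, accuracy)


# At $1 per 1000 solves, each solve costs a tenth of a cent.
print(is_profitable(0.0005, price_per_1000=1.0))  # False
print(is_profitable(0.01, price_per_1000=1.0))    # True
# The same hypothetical action does not pay for itself at $20 per 1000.
print(is_profitable(0.01, price_per_1000=20.0))   # False
```

An attacker whose per-action revenue falls below that threshold is priced out, which is the sense in which a CAPTCHA acts as an economic filter rather than a technical wall.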

Furthermore, CAPTCHAs are a very low-impact defense mechanism, well, not very, but legitimate users remain willing to deal with them, and CAPTCHAs are certainly serviceable as the first line of defense. CAPTCHAs can be used in conjunction with a stronger defense mechanism, like SMS messages, should a user exhibit suspicious behaviors.

So, in our work we’ve evaluated CAPTCHAs from an economic perspective, and we have shown that human solver services are mature and cost effective. We hope that in the future people will give more consideration to evaluating defense mechanisms from both technical and economic perspectives.

Question: You said that one of your goals when you were doing this research was maybe to figure out something about the workforce that you can take advantage of, to maybe make CAPTCHAs more effective. So, is there anything that you can say about what you’ve learned towards that goal?

Answer: Well, you might want to think about making a culturally sensitive CAPTCHA, one that perhaps people from the United States might know an answer to. And we’ve actually seen that deployed in practice. And actually, to sign up for some of these services, for example, you need to be able to read Russian and answer a specific question about who the Prime Minister of Russia is. So you might envision a solution that takes that into account, because if you make it so that the workers really have to understand something unique to a particular region, like the United States, for example, then that can probably weed out a lot of these workers that are in low-cost labor markets.

Question: Could you say anything more about the Klingon example? How did they get 1%? Was that just random chance or do you think you got lucky or unlucky and that the person actually knew Klingon?

Answer: I’d be amazed if they actually knew Klingon, but I actually think that they have a fairly small workforce, and if you saw the CAPTCHA examples that I presented, we also had one example number. So it’s possible that they saw a bulk number of these Klingon CAPTCHAs, and that same worker was like: “You know, I recognize that character now,” because we sent this 222 times. If that same worker has seen the same CAPTCHA over and over and has the example, he might remember the example built into the CAPTCHA. We don’t repeat digits in the same CAPTCHA, but it’s possible that they just learned. We taught them something, maybe.

Question: For the CAPTCHA that was based on dogs and cats – it seemed like the error rates were different when you deployed it and then when it was actually exposed as an API. Do you think that once they exposed it as an API, they actually went through and trained their workers to solve these types of things?

Answer: Nowadays, I think PixProfit actually closed down the registration, but when you actually go through their 30 training examples, now they’re actually training examples, they’ll give you an Asirra CAPTCHA – you have to select these radio buttons, and they actually tell you whether you got it right or wrong. You’re not actually allowed to proceed past that CAPTCHA or not allowed to get admitted into the system until you’ve actually solved that CAPTCHA correctly.

Question: Do you think there’s room for arbitrage in this market, where you plug one guy into another like you were talking about? And sort of more generally, do you think there are economic ways that you can make these systems unprofitable for people running them?

Answer: In response to the second question, I guess it’s possible, because, for example, I saw this recently: I was getting CAPTCHAs on the backend when I was solving CAPTCHAs, and they were just blank. And so, what would end up happening was that my account would get banned, because I can’t solve anything, right? But you’re also allowed to specify that the CAPTCHA has numbers in it, so you keep trying to submit this CAPTCHA and you keep getting it wrong. So that’s one way that you might maliciously affect these backend services. As for the first question – I suppose you could. Like ImageToText, maybe they’re selling some of Decaptcher’s or Antigate’s capabilities. I mean, that seems to make a lot of sense, right? I start up a website and all I do is charge you a little bit more, and then I just use one of these services to actually solve them. That makes a whole lot of sense.

Question: I was just wondering if when you signed up for these services to solve these CAPTCHAs, if you were actually getting paid; do these unscrupulous businesses remain true to their word in paying you?

Answer: I don’t think I was actually legally allowed to pull out the money from their accounts. I didn’t try. I’ve read online on many different forums that they’re legitimate and they do pay out the money.